6.27: Error-Correcting Codes - Hamming Distance



So-called linear codes create error-correction bits by combining the data bits linearly. Here, "linear combination" refers to single-bit binary arithmetic, in which addition (\(\oplus\)) is exclusive-or and multiplication (\(\odot\)) is logical AND:

\[0\oplus 0=0\; \; \; \; \; 1\oplus 1=0\; \; \; \; \; 0\oplus 1=1\; \; \; \; \; 1\oplus 0=1 \nonumber \]

\[0\odot 0=0\; \; \; \; \; 1\odot 1=1\; \; \; \; \; 0\odot 1=0\; \; \; \; \; 1\odot 0=0 \nonumber \]
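In most programming languages these two single-bit operations map directly onto the bitwise exclusive-or and AND operators. The short Python sketch below reproduces both tables; the function names `add_bit` and `mul_bit` are illustrative and do not come from the text.

```python
def add_bit(a: int, b: int) -> int:
    """Binary addition: exclusive-or, equivalently (a + b) mod 2."""
    return a ^ b

def mul_bit(a: int, b: int) -> int:
    """Binary multiplication: logical AND, equivalently a * b."""
    return a & b

# Print both tables for every pair of input bits.
for a in (0, 1):
    for b in (0, 1):
        print(f"{a} xor {b} = {add_bit(a, b)}    {a} and {b} = {mul_bit(a, b)}")
```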

For example, let's consider the specific (3, 1) error correction code described by the following coding table and, more concisely, by the succeeding matrix expression.

\[c(1)=b(1) \nonumber \]

\[c(2)=b(1) \nonumber \]

\[c(3)=b(1) \nonumber \]

or

\[c=Gb \nonumber \]

where

\[G=\begin{pmatrix} 1\\ 1\\ 1 \end{pmatrix} \nonumber \]

\[c=\begin{pmatrix} c(1)\\ c(2)\\ c(3) \end{pmatrix} \nonumber \]

\[b=\begin{pmatrix} b(1) \end{pmatrix} \nonumber \]

The length-K (in this simple example K=1) block of data bits is represented by the vector b, and the length-N output block of the channel coder, known as a codeword, by c.

Every block-oriented linear channel coder is defined by its generator matrix G.
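As a minimal sketch, the product c = Gb can be computed with the binary arithmetic defined above: each codeword bit is the exclusive-or of the data bits selected by one row of G. Representing G as a list of rows and b as a list of bits is an assumption made for illustration; `encode` and `G_rep` are hypothetical names.

```python
def encode(G, b):
    """Compute the codeword c = Gb using binary (mod-2) arithmetic.

    G is a list of N rows, each of length K; b is a list of K data bits.
    Each output bit is the mod-2 sum of the ANDed products of one row of G with b.
    """
    return [sum(g & bit for g, bit in zip(row, b)) % 2 for row in G]

# Generator matrix of the (3,1) repetition code: three rows, one column.
G_rep = [[1], [1], [1]]

print(encode(G_rep, [0]))  # [0, 0, 0]
print(encode(G_rep, [1]))  # [1, 1, 1]
```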

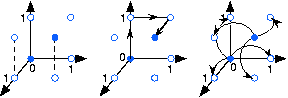

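As we consider other block codes, the simple idea of the decoder taking a majority vote of the received bits won't generalize easily. We need a broader view that takes into account the distance between codewords. A length-N codeword means that the receiver must decide among the \(2^{N}\) possible datawords to select which of the \(2^{K}\) codewords was actually transmitted. As shown in Figure 6.27.1, we can think of the datawords geometrically. We define the Hamming distance between binary datawords \(c_{1}\) and \(c_{2}\), denoted \(d(c_{1},c_{2})\), as the minimum number of bits that must be flipped to go from one word to the other. Because adding 1 in binary arithmetic flips a bit, the Hamming distance equals the number of ones in the sum of the two words: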

\[d(c_{1},c_{2})=sum(c_{1}\oplus c_{2}) \nonumber \]
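The formula translates directly into code: exclusive-or the two words bit by bit and count the ones. A minimal sketch, assuming words are given as equal-length sequences of 0/1 integers; `hamming_distance` is a hypothetical helper name.

```python
def hamming_distance(c1, c2):
    """d(c1, c2) = sum(c1 xor c2): the number of positions where the words differ."""
    if len(c1) != len(c2):
        raise ValueError("datawords must have the same length")
    return sum(a ^ b for a, b in zip(c1, c2))

# The two codewords of the (3,1) repetition code are three bits apart.
print(hamming_distance([0, 0, 0], [1, 1, 1]))  # 3
print(hamming_distance([1, 0, 1], [1, 1, 1]))  # 1
```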

Show that adding the error vector col[1,0,...,0] to a codeword flips the codeword's leading bit and leaves the rest unaffected.

Solution

In binary arithmetic as shown above, adding 0 to a binary value results in that binary value while adding 1 results in the opposite binary value.

Assuming each bit is flipped independently with probability \(p_{e}\), the probability that exactly one bit is flipped anywhere in a length-N codeword is

\[Np_{e}(1-p_{e})^{N-1} \nonumber \]

The number of errors the channel introduces equals the number of ones in the error vector e; as long as \(p_{e}<1/2\), the probability of any particular error vector decreases with the number of errors.
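Under the same independent bit-flip assumption, one particular error vector with k ones occurs with probability \(p_{e}^{k}(1-p_{e})^{N-k}\), and summing over the N single-error vectors gives the expression above. The sketch evaluates both; the values N = 3 and \(p_{e}=0.1\) are arbitrary illustrations, and the function names are hypothetical.

```python
def prob_error_vector(k, N, pe):
    """Probability of one particular length-N error vector containing k ones."""
    return pe**k * (1 - pe)**(N - k)

def prob_exactly_one_error(N, pe):
    """Probability that exactly one bit, anywhere in the codeword, is flipped."""
    return N * pe * (1 - pe)**(N - 1)

N, pe = 3, 0.1  # illustrative values only
for k in range(N + 1):
    # Each additional error multiplies the probability by pe / (1 - pe) < 1.
    print(k, prob_error_vector(k, N, pe))
print(prob_exactly_one_error(N, pe))  # approximately 0.243
```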

To perform decoding when errors occur, we want to find the codeword (one of the filled circles in Figure 6.27.1) that has the highest probability of having been sent: the one closest, in Hamming distance, to the received dataword. Note that if a dataword lies a distance of 1 from two codewords, it is impossible to determine which codeword was actually sent. Consequently, if any two codewords are only two bits apart, the code cannot correct every single-bit channel error. Thus, to have a code that can correct all single-bit errors, codewords must have a minimum separation of three. Our repetition code has this property.
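For the (3,1) repetition code, choosing the closest codeword is exactly the majority vote. The sketch below checks this for all eight possible received words; `decode_nearest` and `CODEWORDS` are hypothetical names. Because the two codewords are three bits apart, no 3-bit word is equidistant from both, so no tie-breaking rule is needed.

```python
from itertools import product

def hamming_distance(c1, c2):
    return sum(a ^ b for a, b in zip(c1, c2))

CODEWORDS = {(0, 0, 0): 0, (1, 1, 1): 1}  # codeword -> data bit

def decode_nearest(received):
    """Return the data bit whose codeword is closest to the received word."""
    best = min(CODEWORDS, key=lambda c: hamming_distance(c, received))
    return CODEWORDS[best]

# Nearest-codeword decoding agrees with a majority vote on every 3-bit word.
for r in product((0, 1), repeat=3):
    majority = 1 if sum(r) >= 2 else 0
    assert decode_nearest(r) == majority
    print(r, "->", decode_nearest(r))
```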

Introducing code bits increases the probability that any bit arrives in error (because bit interval durations decrease). However, an error-correcting code corrects some of those bit reception errors. Do we win or lose by using an error-correcting code? The answer is that we can win if the code is well-designed. The (3,1) repetition code demonstrates that we can lose. To develop good channel coding, we first need a general framework for channel codes and an understanding of what it takes for a code to be maximally efficient: correcting as many errors as possible using as few error-correction bits as possible (making the efficiency K/N as large as possible). We also need a systematic way of finding the codeword closest to any received dataword. A much better code than our (3,1) repetition code is the following (7,4) code.

\[c(1)=b(1) \nonumber \]

\[c(2)=b(2) \nonumber \]

\[c(3)=b(3) \nonumber \]

\[c(4)=b(4) \nonumber \]

\[c(5)=b(1)\oplus b(2)\oplus b(3) \nonumber \]

\[c(6)=b(2)\oplus b(3)\oplus b(4) \nonumber \]

\[c(7)=b(1)\oplus b(2)\oplus b(4) \nonumber \]

where the generator matrix is

\[G=\begin{pmatrix} 1 & 0 & 0 & 0\\ 0 & 1 & 0 & 0\\ 0 & 0 & 1 & 0\\ 0 & 0 & 0 & 1\\ 1 & 1 & 1 & 0\\ 0 & 1 & 1 & 1\\ 1 & 1 & 0 & 1 \end{pmatrix} \nonumber \]

In this (7,4) code, \(2^{4}=16\) of the \(2^{7}=128\) possible blocks at the channel decoder correspond to error-free transmission and reception.
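The count can be confirmed by encoding every 4-bit data block with the generator matrix above, using binary arithmetic. A minimal sketch; `encode` and `codewords` are illustrative names, and G is copied row by row from the text.

```python
from itertools import product

# Generator matrix of the (7,4) code, copied row by row from the text.
G = [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 1],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [1, 1, 0, 1],
]

def encode(G, b):
    """Codeword c = Gb in binary arithmetic, returned as a tuple."""
    return tuple(sum(g & bit for g, bit in zip(row, b)) % 2 for row in G)

codewords = {encode(G, b) for b in product((0, 1), repeat=4)}
print(len(codewords), "codewords out of", 2**7, "possible 7-bit blocks")
```

The set has 16 distinct members because the identity matrix in the top of G copies the data bits into the codeword unchanged.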

Error correction amounts to searching for the codeword c closest to the received block \(\hat{c}\) in terms of the Hamming distance between the two. The error-correction capability of a channel code is limited by how close together any two error-free blocks are. Bad codes would produce blocks close together, which would result in ambiguity when assigning a block of data bits to a received block. The quantity to examine, therefore, in designing error-correction codes is the minimum distance between codewords.
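For a code as small as (7,4), the search can be done exhaustively: compare the received block with all 16 codewords and keep the closest. This brute-force decoder is only a sketch for illustration, not the systematic decoding method the text is working toward; `CODEBOOK` and `decode` are hypothetical names.

```python
from itertools import product

# (7,4) generator matrix from the text: rows give codeword bits, columns data bits.
G = [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 1],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [1, 1, 0, 1],
]

def encode(b):
    """Codeword c = Gb in binary arithmetic, returned as a tuple."""
    return tuple(sum(g & bit for g, bit in zip(row, b)) % 2 for row in G)

def hamming_distance(c1, c2):
    return sum(x ^ y for x, y in zip(c1, c2))

# Exhaustive codebook: all 2^4 = 16 codewords mapped back to their data bits.
CODEBOOK = {encode(b): b for b in product((0, 1), repeat=4)}

def decode(received):
    """Return the data bits of the codeword closest to the received block."""
    nearest = min(CODEBOOK, key=lambda c: hamming_distance(c, received))
    return CODEBOOK[nearest]

b = (1, 0, 1, 1)
received = list(encode(b))
received[2] ^= 1                      # the channel flips the third bit
print(decode(tuple(received)) == b)   # True
```

The cost grows as \(2^{K}\) comparisons per received block, which is why a more systematic decoder is needed for larger codes.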

\[d_{min}=\min_{c_{i}\neq c_{j}}d(c_{i},c_{j}) \nonumber \]
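Taken literally, the definition says to compute the distance for every pair of distinct codewords and keep the smallest. A brute-force sketch for the (7,4) code, with illustrative helper names:

```python
from itertools import combinations, product

# (7,4) generator matrix from the text.
G = [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 1],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [1, 1, 0, 1],
]

def encode(b):
    """Codeword c = Gb in binary arithmetic."""
    return tuple(sum(g & bit for g, bit in zip(row, b)) % 2 for row in G)

def hamming_distance(c1, c2):
    return sum(x ^ y for x, y in zip(c1, c2))

codewords = [encode(b) for b in product((0, 1), repeat=4)]

# Minimum over all unordered pairs of distinct codewords.
d_min = min(hamming_distance(ci, cj) for ci, cj in combinations(codewords, 2))
print(d_min)  # 3
```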

To have a channel code that can correct all single-bit errors,

\[d_{min}\geq 3 \nonumber \]

Suppose we want a channel code to have an error-correction capability of n bits. What must the minimum Hamming distance \(d_{min}\) between codewords be?

Solution

\[d_{min}\geq 2n+1 \nonumber \]
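Correcting n errors requires that a received block with up to n flipped bits remain strictly closer to the transmitted codeword than to any other, which holds when \(d_{min}\) is at least 2n+1. Inverting that condition gives the standard formula \(n=\lfloor (d_{min}-1)/2\rfloor\). Both directions are sketched below; the function names are hypothetical.

```python
def required_d_min(n):
    """Smallest minimum distance that lets a code correct n bit errors."""
    return 2 * n + 1

def correctable_errors(d_min):
    """Largest n with 2n + 1 <= d_min: errors per block that
    nearest-codeword decoding is guaranteed to correct."""
    return (d_min - 1) // 2

for n in range(4):
    print(f"to correct {n} error(s), need d_min >= {required_d_min(n)}")
```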

How do we calculate the minimum distance between codewords? Because we have \(2^{K}\) codewords, the number of possible unique pairs equals \(2^{K-1}(2^{K}-1)\), which can be a large number. Recall that our channel coding procedure is linear, with c=Gb. Therefore

\[c_{i}\oplus c_{j}=G(b_{i}\oplus b_{j}) \nonumber \]
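The sketch below checks, for the (7,4) code, the two facts this argument relies on: the number of unique pairs among \(2^{K}\) codewords equals \(2^{K-1}(2^{K}-1)\), and the sum of any two codewords equals G applied to the sum of their data blocks, so it is again a codeword. Helper names are illustrative.

```python
from itertools import combinations, product

# (7,4) generator matrix from the text.
G = [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 1],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [1, 1, 0, 1],
]

def encode(b):
    """Codeword c = Gb in binary arithmetic."""
    return tuple(sum(g & bit for g, bit in zip(row, b)) % 2 for row in G)

def xor(u, v):
    """Bitwise binary sum of two equal-length words."""
    return tuple(a ^ b for a, b in zip(u, v))

K = 4
data_blocks = list(product((0, 1), repeat=K))
codewords = {encode(b) for b in data_blocks}

pairs = list(combinations(codewords, 2))
assert len(pairs) == 2**(K - 1) * (2**K - 1)  # 8 * 15 = 120

# Linearity: c_i xor c_j equals G(b_i xor b_j), itself a codeword.
for bi in data_blocks:
    for bj in data_blocks:
        assert xor(encode(bi), encode(bj)) == encode(xor(bi, bj))
        assert xor(encode(bi), encode(bj)) in codewords
print("unique pairs:", len(pairs))
```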

Because \(b_{i}\oplus b_{j}\) always yields another block of data bits, we find that the difference between any two codewords is another codeword (in binary arithmetic, subtraction is the same as addition)! Thus, to find \(d_{min}\) we need only compute the number of ones that comprise all non-zero codewords. Finding these codewords is easy once we examine the coder's generator matrix. Note that the columns of G are codewords (why is this?), and that all codewords can be found as sums of all possible combinations of the columns. To find \(d_{min}\), we need only count the number of ones in each column and in each sum of columns. For our example (7,4) code, G's first column has three ones, the next one four, and the last two three. Considering sums of column pairs next, note that because the upper portion of G is an identity matrix, the upper portion of every sum of two columns must have exactly two ones. Because the bottom portion of each column differs from those of the other columns in at least one place, the bottom portion of a sum of two columns must have at least one one. Sums of three or four columns will have at least three ones because the upper portion of G is an identity matrix. Thus, no sum of columns has fewer than three ones, which means that \(d_{min}=3\), and we have a channel coder that can correct all occurrences of one error within a received 7-bit block.
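The counting argument can be checked mechanically: compute the weight (number of ones) of each column of G, then the smallest weight among all 15 non-zero codewords, which by the argument above equals \(d_{min}\). A sketch for the (7,4) code; helper names are illustrative.

```python
from itertools import product

# (7,4) generator matrix from the text.
G = [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 0, 0, 1],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [1, 1, 0, 1],
]

def encode(b):
    """Codeword c = Gb in binary arithmetic."""
    return tuple(sum(g & bit for g, bit in zip(row, b)) % 2 for row in G)

# Each column of G is the codeword for a data block containing a single 1.
columns = [[row[k] for row in G] for k in range(4)]
print([sum(col) for col in columns])  # [3, 4, 3, 3]

# d_min equals the smallest weight among the non-zero codewords.
nonzero = [encode(b) for b in product((0, 1), repeat=4) if any(b)]
d_min = min(sum(c) for c in nonzero)
print(d_min)  # 3
```

This needs only \(2^{K}-1\) weight computations instead of \(2^{K-1}(2^{K}-1)\) pairwise distances.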