8.11: Approximate Numerical Solutions Based on the Convolution Sum

- Page ID

- 8046

In Section 6.5, we developed a recurrence formula for the approximate numerical solution of an LTI 1st order ODE with any IC and any physically plausible input function \(u(t)\). It is possible to generalize the recurrence-formula approach to 2nd and higher order LTI ODEs, but it would require development of theory and detail that are beyond the objectives of this book (see, for examples: Craig, 1982, Section 7.1; and Meirovitch, 2001, Section 4.10). There is, however, an alternative, relatively simple approach based upon the convolution integral, and this approach is readily applicable to calculating approximate numerical solutions for forced response of 1st and 2nd order LTI systems.

To describe this approach, we begin with the general convolution (Duhamel) integral for forced response, which is valid for any LTI system:

\[\left.x(t)\right|_{I C s=0}=\int_{\tau=0}^{\tau=t} u(\tau) h(t-\tau) d \tau\label{eqn:8.41} \]

If initial conditions (ICs) are not zero, then appropriate IC-response solutions must be added to the convolution integral, which represents only the forced response. Examples of such IC-response solutions are given explicitly in Eqs. (6-5), (7-9), and, later, (9-20). Hereafter in this section, we will consider only the forced response, so it will be convenient to omit the “\(IC\)s = 0” subscript from the output variable and denote it simply as \(x(t)\), and later as \(x\) with subscripts, \(x_5\) for example.

We seek approximate evaluations of Equation \(\ref{eqn:8.41}\) in time-series form, that is, at discrete, equally spaced instants in time. Accordingly, just as in Section 6.5, we define the following notation that employs descriptive subscripts, with the initial instant defined as time zero:

| \(t=\) | \(t_1=0\) | \(t_2=t_1 + \Delta t = \Delta t\) | \(t_3=t_2 + \Delta t = 2 \Delta t\) | \(\dots\) | \(\begin{array}{c} t_{n}=t_{n-1}+\Delta t \\ =(n-1) \Delta t \end{array}\) |

\(\dots\) |

| \(x(t)=\) | \(x_{1}\) | \(x_{2}\) | \(x_{3}\) | \(\dots\) | \(x_n\) | \(\dots\) |

| \(u(t)=\) | \(u_{1}\) | \(u_{2}\) | \(u_{3}\) | \(\dots\) | \(u_n\) | \(\dots\) |

| \(h(t)=\) | \(h_{1}\) | \(h_{2}\) | \(h_{3}\) | \(\dots\) | \(h_n\) | \(\dots\) |

Let us designate as a sequence of length \(N\) any series of \(N\) numbers such as \(t_{1}, t_{2}, \ldots, t_{N}\), or \(x_{1}, x_{2}, \ldots, x_{N}\) and let us denote the entire sequence as \(\{t\}_{N}\), or \(\{x\}_{N}\). If time \(t\) is the independent variable, and quantify \(f(t)\) is any time-dependent function, let us denote the values of \(f(t)\) at times \(t_{1}, t_{2}, \ldots, t_{N}\) as \(\{f(t)\}_{N}\).

Forced-response solution Equation \(\ref{eqn:8.41}\) is exact. But now we introduce what, in general, is an approximation. We assume that the integrand product \(u(\tau) h(t-\tau)\) varies so little over the integration time step \(\Delta t\) that it introduces only small error to approximate \(u(\tau) h(t-\tau)\) as being constant over \(\Delta t\), with its value remaining that at the beginning of the time step:

\[u(\tau) h(t-\tau) \approx u\left(t_{k-1}\right) h\left(t-t_{k-1}\right) \text { for } t_{k-1} \leq \tau<t_{k}\label{eqn:8.42} \]

In Equation \(\ref{eqn:8.42}\), \(k\) is an index that varies, just as \(\tau\) varies over the integration limits in Equation \(\ref{eqn:8.41}\).

Suppose that we have matching sequences of time \(\{t\}_{N}\), input \(\{u(t)\}_{N}\), and IRF \(\{h(t)\}_{N}\), and we wish to calculate the corresponding forced-response output sequence, \(\{x(t)\}_{N}\). Then, using approximation Equation \(\ref{eqn:8.42}\), we express Equation \(\ref{eqn:8.41}\) as follows, with the convolution integral approximated as a summation:

\[\left.\begin{array}{l}

x\left(t_{1}\right)=0 \\

x\left(t_{n}\right)=\sum_{k=1}^{n-1} u\left(t_{k}\right) h\left(t_{n}-t_{k}\right) \Delta t, \text { for } 2 \leq n \leq N

\end{array}\right\}\label{eqn:8.43} \]

Additional explanation of Equation \(\ref{eqn:8.43}\) might be helpful, because the extrapolation of Equation \(\ref{eqn:8.41}\) from continuous time to discrete time is not completely obvious. Physically, the forced response must be zero at the instant \(t=t_{1}=0\) when forcing is begun, even if the initial input value \(u(0)\) is nonzero; also, the exact convolution integral Equation \(\ref{eqn:8.41}\) gives the same result for the upper limit \(\tau=t_{1}=0\); hence, \(x\left(t_{1}\right)=0\). Regarding summation only over \(n − 1\) values of product \(u\left(t_{k}\right) h\left(t_{n}-t_{k}\right) \Delta t\) in order to obtain \(x\left(t_{n}\right)\), consider again approximation Equation \(\ref{eqn:8.42}\). Equation \(\ref{eqn:8.42}\) means that the very last term in the summation of Equation \(\ref{eqn:8.43}\) that approximates the integral for \(x\left(t_{n}\right)\) is the product \(u\left(t_{n-1}\right) h\left(t_{n}-t_{n-1}\right) \Delta t\).

Equation \(\ref{eqn:8.43}\) can be written in the following simpler form, using subscript notation and the constancy of time step \(\Delta t\):

\[\left.\begin{array}{l}

x_{1}=0 \\

x_{n}=\Delta t \sum_{k=1}^{n-1} u_{k} h_{n-k}, \text { for } 2 \leq n \leq N

\end{array}\right\}\label{eqn:8.44} \]

The summation in Equation \(\ref{eqn:8.44}\) is called a convolution sum.

It is instructive to write out explicitly a few terms of Equation \(\ref{eqn:8.44}\), and then to study a graphical interpretation of the process. Suppose that we have sequences of input values \(\{u(t)\}_{N}\) and IRF values \(\{h(t)\}_{N}\), with length \(N \geq 4\), and we wish to find the corresponding sequence of responses \(\{x(t)\}_{N}\). Then the first five terms in the response sequence, from Equation \(\ref{eqn:8.44}\), are:

\[x_{2}=\Delta t\left(u_{1} h_{1}\right)\label{eqn:8.45b} \]

\[x_{3}=\Delta t\left(u_{1} h_{2}+u_{2} h_{1}\right)\label{eqn:8.45c} \]

\[x_{4}=\Delta t\left(u_{1} h_{3}+u_{2} h_{2}+u_{3} h_{1}\right)\label{eqn:8.45d} \]

\[x_{5}=\Delta t\left(u_{1} h_{4}+u_{2} h_{3}+u_{3} h_{2}+u_{4} h_{1}\right)\label{eqn:8.45e} \]

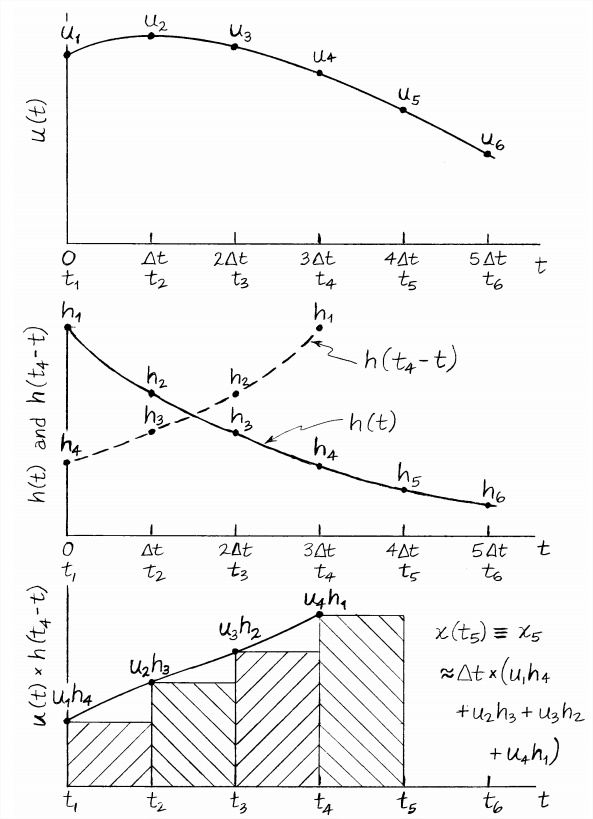

Figure \(\PageIndex{1}\) on the next page is a graphical representation of many aspects of the previous discussion. The figure consists of three graphs of conceptual time-dependent functions, with the three time axes aligned. The top graph is a typical input function, \(u(t)\); and one plot on the middle graph is a unit-impulse response function (IRF), \(h(t)\), which was chosen to resemble the exponential IRF of a 1st order system, with, in particular, \(h(0) \neq 0\). The second (dashed) plot on the middle graph is the function \(h\left(t_{4}-t\right)\), and it was chosen to illustrate Equation \(\ref{eqn:8.45e}\) for output quantity \(x_5\).

Observe on the middle graph of Figure \(\PageIndex{1}\) that \(h\left(t_{4}-t\right)\) is derived from \(h(t)\) by “folding” \(h(t)\) about a vertical line through time \(t_{4} / 2\) and then setting to zero the folded function for all times \(t<0\). One of the dictionary definitions for the word “convolution” as a noun is “a fold”, and this is apparently the reason for the use of “convolution” as the name of a transform, integral, or sum (Evans, 1954, pp. 199-204). It seems that “convolution sum”, for example, sounds more elegant in English than “folding sum”; however, the German noun for mathematical convolution is Faltung, meaning “folding” or “bending”, and Faltung was often used interchangeably with “convolution” in older English-language textbooks and other technical literature.

The plot on the bottom graph of Figure \(\PageIndex{1}\) is the product \(u(t) h\left(t_{4}-t\right)\). At the times \(t_{1}\), \(t_{2}\), \(t_{3}\), and \(t_{4}\), the discrete products \(u_{k} h_{5-k}\) are marked, and approximation Equation \(\ref{eqn:8.42}\) of piecewise constancy is illustrated by straight horizontal lines, each representing the time step \(\Delta t\). This is a stairstep approximation similar to that used in Section 6.5. The bottom graph shows that Equation \(\ref{eqn:8.45e}\) for \(x_5\) is the area under all the cross-hatched rectangles (the entire “staircase”); that area is an approximation of the integral from Equation \(\ref{eqn:8.41}\), \(\int_{\tau=0}^{\tau=t_{5}} u(\tau) h\left(t_{5}-\tau\right) d \tau\). It is obvious from this graphical interpretation of the convolution sum that smaller values of time step \(\Delta t\) will produce more accurate numerical integrations, at the expense of longer calculations.

For the conceptual example of Figure \(\PageIndex{1}\), which resembles a 1st order system that has initial IRF value \(h(0)=h_{1} \neq 0\), and also initial input value \(u(0)=u_{1} \neq 0\), Equation \(\ref{eqn:8.45b}\) gives \(x_{2}=\Delta t\left(u_{1} h_{1}\right) \neq 0\). This is noted because it is easy to make the mistake of calculating approximate time response by applying directly the MATLAB function conv, as in the command line X = conv(u,h), and then by taking the first \(N\) elements of the resulting sequence \(\{X\}_{2 N-1}\) to be the desired forced response, \(\{x(t)\}_{N}\); this process executes the incorrect equation

\[x\left(t_{n}\right)=\sum_{k=1}^{n} u\left(t_{k}\right) h\left(t_{n+1}-t_{k}\right) \Delta t=x_{n}=\Delta t \sum_{k=1}^{n} u_{k} h_{n+1-k} \nonumber \]

for \(1 \leq n \leq N\).

This incorrect equation calculates a sequence with \(N-1\) elements the same as those of the correct forced-response sequence, but the incorrect sequence is shifted backward in time by a single time step, \(\Delta t\). In particular, the incorrect process sets \(x_{1}=\Delta t\left(u_{1} h_{1}\right)\), which would be nonzero for the conceptual problem of Figure \(\PageIndex{1}\), and might alert the analyst that something is wrong. (Recall from the discussion above that the initial forced-response value \(x_1\) must be zero.) But for most systems other than standard 1st order systems, the initial value of the IRF is zero, \(h_{1}=0\), so the error in \(x_{1}=\Delta t\left(u_{1} h_{1}\right)\) would not be obvious in the calculated results. The lessons from this discussion are:

- use of the incorrect convolution sum might produce results that are not obviously incorrect; in fact, for very small \(\Delta t\), the incorrect and correct plots of approximate response versus time might be almost indistinguishable visually; and

- if you apply directly MATLAB’s function

conv, then, in order to obtain the correct approximate forcedresponse sequence, be sure to shift the sequence calculated byconvforward in time by a single time step, \(\Delta t\), and to set \(x_{1}=0\).

The following are two numerical examples of the application of Equation \(\ref{eqn:8.44}\), first for a 1st order ODE, next for an undamped 2nd order ODE.

Convolution-sum Example \(\PageIndex{1}\): 1st order systems

Consider the standard 1st order LTI-ODE of a stable system, Equation 3.4.8, for dependent variable \(x(t)\): \(\dot{x}+\left(1 / \tau_{1}\right) x=b u(t)\). From Section 8.5 and homework Problem 8.5.1, the unit-impulse-response function (IRF) is \(h(t)=b e^{-t / \tau_{1}}\). Letus set the input function to be a declining ramp, \(u(t)= c(a-t)\), in which \(c\), a dimensional constant, is the downward slope of the ramp, and \(a\) is the time at which the input passes through zero. For reference, the exact solution for this problem is derived from Equations 6.2.4 or \(\ref{eqn:8.41}\)1:

\[x(t)=b e^{-t / \tau_{1}} \int_{\tau=0}^{\tau=t} e^{\tau / \tau_{1}} u(\tau) d \tau=b e^{-t / \tau_{1}} \int_{\tau=0}^{\tau=t} e^{\tau / \tau_{1}} c(a-\tau) d \tau \nonumber \]

\[=b c \tau_{1}\left[\left(a+\tau_{1}\right)\left(1-e^{-t / \tau_{1}}\right)-t\right] \nonumber \]

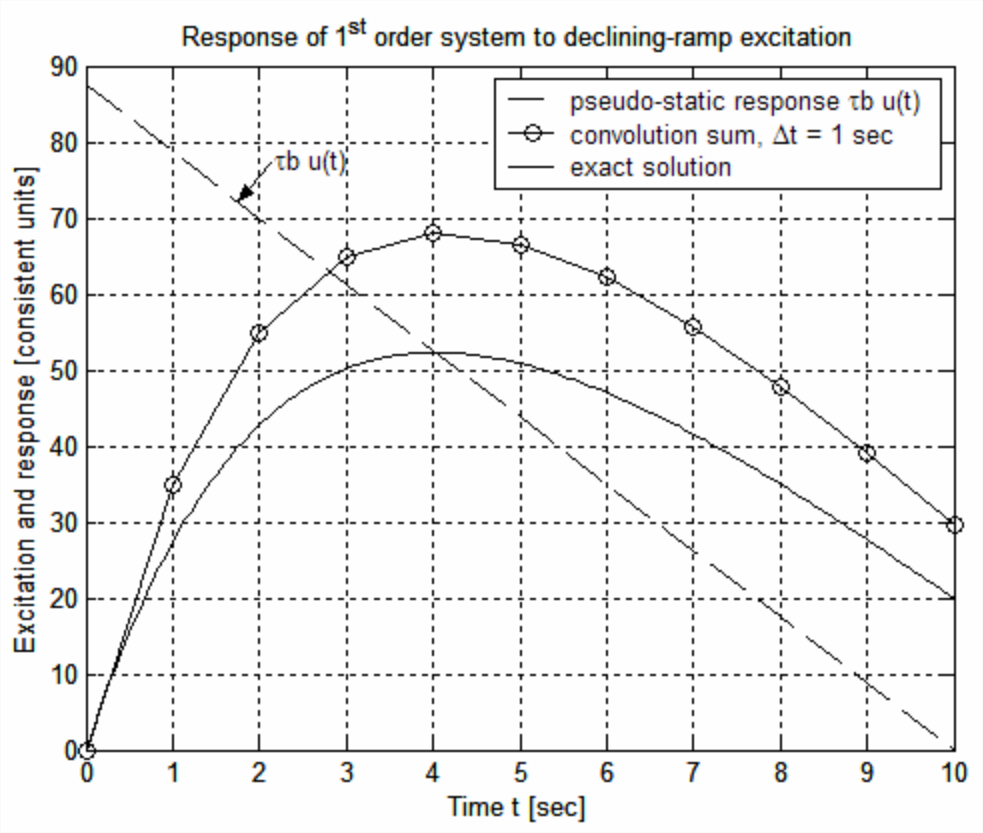

With parameters \(\tau_{1}\) = 2.5 s, \(a\) = 10 s, \(b\) = 3.5, and \(c\) = 1 (b and c in consistent units), the MATLAB commands to calculate and plot the exact solution \(x(t)\), and the approximate solution from Equation \(\ref{eqn:8.44}\), for the relatively large time step \(\Delta t\) = 1 s, are given next, followed by the resulting graph, which was edited later to add labels, title, and legend. Observe in the MATLAB code that the declining-ramp is entered into the input sequence \(\{u(t)\}_{N}\), as is the IRF sequence \(\{h(t)\}_{N}\). Note that the simple code to calculate the convolution sums over the entire forced-response sequence, ∑\(\frac{x_{n}}{\Delta t}=\sum_{k=1}^{n-1} u_{k} h_{n-k}\) for \(2 \leq n \leq N\), consists of a for loop over index \(k\) nested within a for loop over index \(n\).

>> a=10;b=3.5;c=1;tau=2.5;

dt=1;t=0:dt:10;

N=length(t); %sequence length

u=c*(a*ones(1,N)-t); %declining-ramp excitation

plot(t,tau*b*u,'k--'),grid %pseudo-static response

h=b*exp(-t/tau); %IRF, impulse-response function

xc(1)=0; %zero initial value

for n=2:N

sum=0;

for k=1:(n-1)

sum=sum+u(k)*h(n-k);

end

xc(n)=sum;

end

xc=dt*xc;

hold,plot(t,xc,'ko-')

te=0:0.05:10;

xe=c*b*tau*((a+tau)*(1-exp(-te/tau))-te); %exact solution

plot(te,xe,'k')

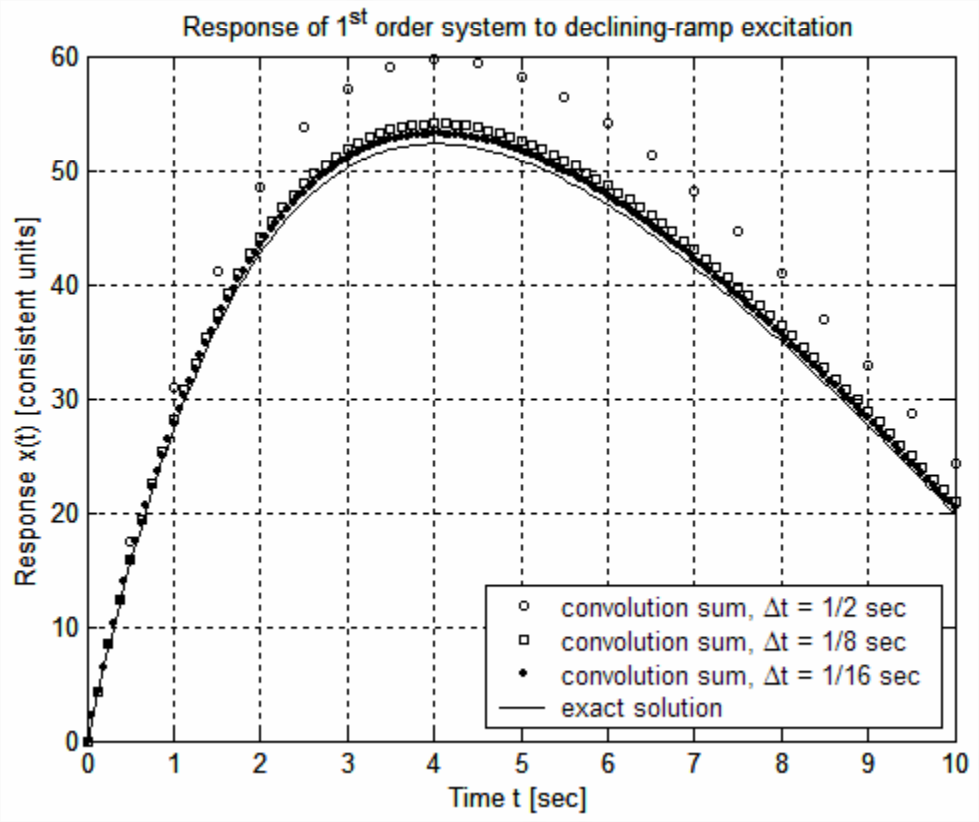

The graph above shows that Equation \(\ref{eqn:8.44}\) with the relatively large time step \(\Delta t=1\) s produces a poor approximation to the exact solution of the 1st order ODE in this problem. The following MATLAB command lines evaluate Equation \(\ref{eqn:8.44}\) and plot the results for three progressively smaller values of \(\Delta t\).

>> a=10;b=3.5;c=1;tau=2.5;

tend=10;

m=[2 8 16]; %inverses of time steps for convolution

maxln=tend*max(m)+1;

t=zeros(3,maxln);xc=zeros(3,maxln); %initialize oversized arrays

for j=1:3

dt=1/m(j);tj=0:dt:tend;N(j)=length(tj);t(j,1:N(j))=tj;

u=c*(a*ones(1,N(j))- tj); %excitation

h=b*exp(-tj/tau); %IRF

xc(j,1)=0; %zero initial value

for n=2:N(j)

sum=0;

for k=1:(n-1)

sum=sum+u(k)*h(n-k);

end

xc(j,n)=dt*sum;

end

end

plot(t(1,1:N(1)),xc(1,1:N(1)),'ko',t(2,1:N(2)),xc(2,1:N(2)),'ks')

hold,plot(t(3,1:N(3)),xc(3,1:N(3)),'k.')

te=0:0.05:tend;

xe=c*b*tau*((a+tau)*(1-exp(-te/tau))-te); %exact solution

plot(te,xe,'k'),grid

The results above demonstrate for this 1st order system that the approximate solution calculated from the convolution sum approaches the exact solution as \(\Delta t \rightarrow 0\). This concludes Convolution-sum Example 1.

Convolution-sum Example \(\PageIndex{2}\): undamped 2nd order system

Consider the LTI-ODE Equation 7.1.5 for a standard undamped 2nd order system, \(\ddot{x}+\omega_{n}^{2} x=\omega_{n}^{2} u(t)\), with ICs \(x(0)=0\) and \(\dot{x}(0)=0\). From Sections 8.7 and 8.10 [Equations 8.7.3 and 8.10.2], the unit-impulse-response function (IRF) is \(h(t)=\omega_{n} \sin \omega_{n} t\). Let us set the input function to be a declining-ramp pulse,

\[u(t)=\left\{\begin{array}{c}

c(a-t), 0 \leq t<t_{d} \\

0, t_{d}<t

\end{array}\right. \nonumber \]

in which \(c\), a dimensional constant, is the downward slope of the ramp, \(ca\) is the input at \(t = 0\), and \(t_d\) is the pulse duration. For reference, the exact solution equations for this problem are derived next from Equations 7.2.4 and 7.2.5, without details of the integration processes. For the interval during which the pulse is active, \(0 \leq t \leq t_{d}\), we apply Equation 7.2.4, although Equation 7.2.5 would serve just as well:

\[x(t)=\omega_{n} \int_{\tau=0}^{\tau=t} \sin \omega_{n} \tau \times u(t-\tau) d \tau=\omega_{n} \int_{\tau=0}^{\tau=t} \sin \omega_{n} \tau \times c[a-(t-\tau)] d \tau \nonumber \]

\[=c\left[a-t-a \cos \omega_{n} t+\left(1 / \omega_{n}\right) \sin \omega_{n} t\right], \text { valid for } 0 \leq t \leq t_{d} \nonumber \]

For the time after the pulse ceases, \(t_{d}<t\), Equation 7.2.5 is preferable since the input \(u(t)\) is zero for \(t_{d}<t\), so that the upper limit of integration is clearly \(t_{d}\):

\[x(t)=\omega_{n} \int_{\tau=0}^{\tau=t} \sin \omega_{n}(t-\tau) \times u(\tau) d \tau=\omega_{n} \int_{\tau=0}^{\tau=t_{d}} \sin \omega_{n}(t-\tau) \times c(a-\tau) d \tau \nonumber \]

\[=\omega_{n} c\left[\sin \omega_{n} t \int_{\tau=0}^{\tau=t_{d}} \cos \omega_{n} \tau \times(a-\tau) d \tau-\cos \omega_{n} t \int_{\tau=0}^{\tau=t_{d}} \sin \omega_{n} \tau \times(a-\tau) d \tau\right] \nonumber \]

\[\left.\begin{array}{l}

=c\left\{\sin \omega_{n} t\left[a \sin \omega_{n} t_{d}-\left(1 / \omega_{n}\right)\left(\omega_{n} t_{d} \sin \omega_{n} t_{d}+\cos \omega_{n} t_{d}-1\right)\right]\right. \\

.\end{array}\right\}, \text { valid for } t_{d}<t \nonumber \]

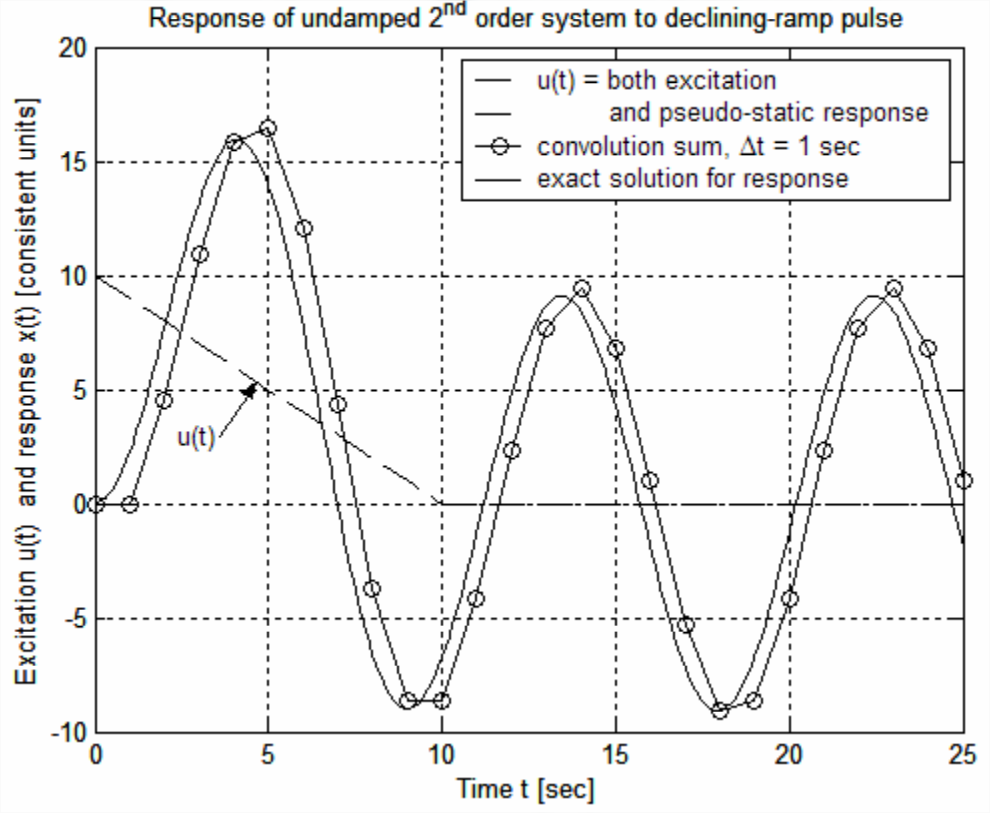

Let the parameters be: undamped natural frequency \(\omega_{n}=\pi / 4.5\) rad/s (making the associated period 9 s), \(2\) = 10 s, \(c\) = 1 (in consistent units), and pulse period \(t_d\) = 10 s2. The MATLAB commands to calculate and plot the exact solution \(x(t)\), and the approximate solution from Equation \(\ref{eqn:8.44}\), for the relatively large time step \(\Delta t\) = 1 s, are given next, followed by the resulting graph of response, which was edited later to add labels, title, and legend. Observe in the MATLAB code that the entire declining-ramp pulse, including zeros, is entered into the input sequence \(\{u(t)\}_{N}\), as is the IRF sequence \(\{h(t)\}_{N}\). Also, just as in Convolution-sum Example 1, the simple code to calculate the convolution sums over the entire forced-response sequence, \(\frac{x_{n}}{\Delta t}=\sum_{k=1}^{n-1} u_{k} h_{n-k}\) for \(2 \leq n \leq N\), consists of a for loop over index \(k\) nested within a for loop over index \(n\).

>> a=10;c=1;wn=pi/4.5;td=10;

tend=25; %time range (sec)

dt=1;t=0:dt:tend;

nd=td/dt+1; %warning: not necessarily an integer for arbitrary td

N=length(t); %sequence length

u=zeros(1,N);

u(1:nd)=c*(a*ones(1,nd)-t(1:nd)); %declining-ramp pulse

plot(t(1:nd),u(1:nd),'k--'),grid %pseudo-static response

hold,plot(t(nd:N),zeros(1,(N-nd+1)),'k--')

h=wn*sin(wn*t); %IRF, impulse-response function

xc(1)=0; %zero initial value

for n=2:N

sum=0;

for k=1:(n-1)

sum=sum+u(k)*h(n-k);

end

xc(n)=sum;

end

xc=dt*xc;

plot(t,xc,'ko-')

t1=0:0.1:td;t2=(td+0.1):0.1:tend;te=[t1 t2];

wt1=wn*t1;wt2=wn*t2;

x1=c*(a-t1-a*cos(wt1)+sin(wt1)/wn);

arg=wn*td;sn=sin(arg);cn=cos(arg);

S=a*sn-(arg*sn+cn-1)/wn;C=a*(1-cn)-(-arg*cn+sn)/wn;

x2=c*(S*sin(wt2)-C*cos(wt2));

xe=[x1 x2]; %exact solution

plot(te,xe,'k')

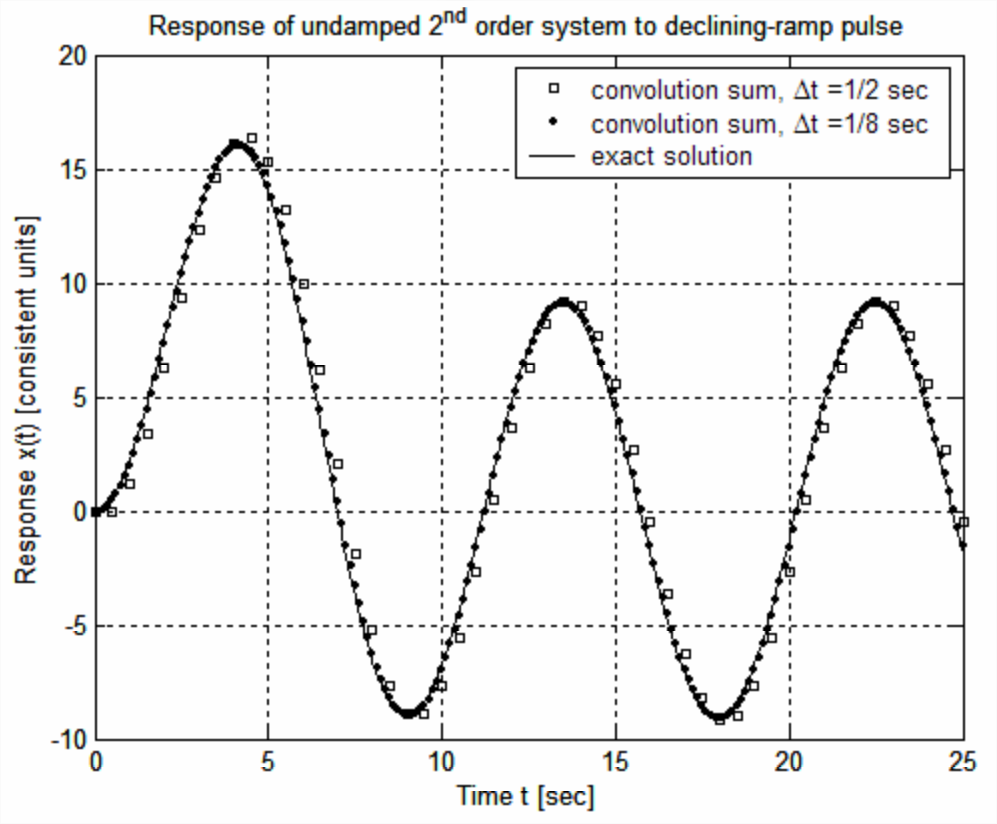

The graph above shows that Equation \(\ref{eqn:8.44}\) with the relatively large time step \(\Delta t\) = 1 s produces only a mediocre approximation to the exact solution of the 2nd order ODE in this problem. But the plot of the convolution-sum solution also illustrates an important point: not only is \(x_1\) = 0, the requirement discussed previously, but also \(x_2\) = \(u_1h_1\) = 0 because the initial IRF value \(h_1\) = 0 for the 2nd order ODE, regardless of the initial value \(u_1\) of the input. The appropriate approximation in this case for the initial time derivative of response is \(\frac{d x}{d t}(t=0) \approx \frac{x_{2}-x_{1}}{\Delta t}=0\). The necessary initial conditions (ICs) for a 2nd order ODE are both \(x(0)\) and \(\dot{x}(0)\). As is stated at the beginning of this section, any nonzero ICs must be accounted for by separate IC-response solutions, as these ICs cannot be included in the forced-response solution, which is the convolution sum. This means that we must have \(x_{1}=x_{2}=0\) for this, or, in fact, any correct convolution-sum approximate solution of a standard 2nd order ODE. The plot above of the convolution-sum solution also demonstrates a negative (relative to numerical accuracy) consequence of the necessary condition \(x_{2}=0\): a consistent time lag, of the approximate solution relative to the exact solution, on the order of \(\Delta t\). The following MATLAB command lines evaluate Equation \(\ref{eqn:8.44}\) and plot the results (on the next page) for two progressively smaller values of \(\Delta t\).

>> a=10;c=1;wn=pi/4.5;td=10;

tend=25; %time range (sec)

m=[2 8]; %inverses of time steps for convolution

maxln=tend*max(m)+1;

t=zeros(2,maxln);xc=zeros(2,maxln); %initialize oversized arrays

for j=1:2

dt=1/m(j);tj=0:dt:tend;N(j)=length(tj);t(j,1:N(j))=tj;

nd=td/dt+1; %warning: not an integer for arbitrary td

u=zeros(1,N(j));

u(1:nd)=c*(a*ones(1,nd)-tj(1:nd)); %declining-ramp pulse

h=wn*sin(wn*tj); %IRF, impulse-response function

xc(j,1)=0; %zero initial value

for n=2:N(j)

sum=0;

for k=1:(n-1)

sum=sum+u(k)*h(n-k);

end

xc(j,n)=dt*sum;

end

end

plot(t(1,1:N(1)),xc(1,1:N(1)),'ks')

hold,plot(t(2,1:N(2)),xc(2,1:N(2)),'k.'),grid

t1=0:0.1:td;t2=(td+0.1):0.1:tend;te=[t1 t2];

wt1=wn*t1;wt2=wn*t2;

x1=c*(a-t1-a*cos(wt1)+sin(wt1)/wn);

arg=wn*td;sn=sin(arg);cn=cos(arg);

S=a*sn-(arg*sn+cn-1)/wn;C=a*(1-cn)-(-arg*cn+sn)/wn;

x2=c*(S*sin(wt2)-C*cos(wt2));

xe=[x1 x2]; %exact solution

plot(te,xe,'k')

The numerical solution for time step \(\Delta t=1 / 2\) s is a fair approximate solution, but still with a noticeable time lag, relative to the exact solution, on the order of \(\Delta t\). However, the numerical solution for time step \(\Delta t=1 / 8\) s is almost indistinguishable from the exact solution by eye on the graph. This concludes Convolution-sum Example 2.

Finally, we note a curious intersection of two seemingly unrelated mathematical operations, convolution sum and multiplication of polynomials. Suppose that we have two arbitrary sequences of numbers, \(\{u\}_{N}\) and \(\{h\}_{M}\). Consider the convolution sum in the form applied by the MATLAB command line X = conv(u,h), which produces the sequence \(X_{n}=\sum_{k=1}^{n} u_{k} h_{n+1-k}\) for \(1 \leq n \leq(N+M-1): \quad X_{1}=u_{1} h_{1}, \quad X_{2}=u_{1} h_{2}+u_{2} h_{1}, \quad X_{3}=u_{1} h_{3}+u_{2} h_{2}+u_{3} h_{1}, \quad X_{4}=u_{1} h_{4}+u_{2} h_{3}+u_{3} h_{2}+u_{4} h_{1}\), etc. Evans (1954, pp. 201-203) observed that the convolution-sum process can be executed with use of tables in a “process similar to multiplication.” First, we define the table of sequences \(\{h\}_{M}\) and \(\{u\}_{N}\):

| \(\{h\}_{M}=\) | \(h_1\) | \(h_2\) | \(h_3\) | \(h_4\) | \(\dots\) |

| \(\{u\}_{N}=\) | \(u_1\) | \(u_2\) | \(u_3\) | \(u_4\) | \(\dots\) |

In order to execute the operation \(X_{n}=\sum_{k=1}^{n} u_{k} h_{n+1-k}\) using the sequences \(\{h\}_{M}\) and \(\{u\}_{N}\), we construct the table of products below. The first row of the table consists of the products \(u_{1} \times\{h\}_{M}\), the second row consists of the products \(u_{2} \times\{h\}_{M}\) and this sequence is offset by one column toward the right, the third row consists of the products \(u_{3} \times\{h\}_{M}\) and this sequence is offset by two columns toward the right, etc.

| \(n=1\) | \(n=2\) | \(n=3\) | \(n=4\) | \(\dots\) | |

| \(k=1\) | \(u_1h_1\) | \(u_1h_2\) | \(u_1h_3\) | \(u_1h_4\) | \(\dots\) |

| \(k=2\) | \(u_2h_1\) | \(u_2h_2\) | \(u_2h_3\) | \(\dots\) | |

| \(k=3\) | \(u_3h_1\) | \(u_3h_2\) | \(\dots\) | ||

| \(k=4\) | \(u_4h_1\) | \(\dots\) | |||

| \(\dots\) | \(\dots\) |

Then each element \(X_{n}\) of the sequence \(\{X\}_{N+M-1}\) is the sum of the products in the corresponding \(n\)th column of the table of products above:

| \(\{X\}_{N+M-1}=\) | \(X_{1}=u_{1} h_{1}\) | \(\begin{array}{l} X_{2}=u_{1} h_{2}+ \\ u_{2} h_{1} \end{array}\) |

\(\begin{array}{l} X_{3}=u_{1} h_{3}+ \\ u_{2} h_{2}+u_{3} h_{1} \end{array}\) |

\(\begin{array}{l} X_{4}=u_{1} h_{4}+ \\ u_{2} h_{3}+u_{3} h_{2}+ \\ u_{4} h_{1} \end{array}\) |

\(dots\) |

A numerical example applying MATLAB’s conv function to multiplication of two polynomials was given in Section 6.1. Here is another example of the process using the notation in the tables above, the product of a 1st degree polynomial, \(P_{1}\), with a 2nd degree polynomial, \(P_{2}\):

\[P_{1} \times P_{2}=\left(u_{1} s+u_{2}\right) \times\left(h_{1} s^{2}+h_{2} s+h_{3}\right)=u_{1} h_{1} s^{3}+\left(u_{1} h_{2}+u_{2} h_{1}\right) s^{2}+\left(u_{1} h_{3}+u_{2} h_{2}\right) s+u_{2} h_{3} \nonumber \]

With zero input polynomial coefficients \(u_{i}=0\) for \(i > 2\) and \(h_j = 0\) for \(j > 3\), the coefficients of the product cubic polynomial (in descending order of powers of \(s\)) are the coefficients \(X_1\), \(X_2\), \(X_3\), and \(X_4\) from the last table above.

If you wished to execute product \(P_{1} \times P_{2}\), you would probably use the basic algebra below for multiplication of two polynomials, which, although less general, is essentially the same as the tabular process described above.

| Polynomial \(P_2\) = | \(h_{1} s^{2}+\) | \(h_{2} s+\) | \(h_{3}\) | |

| Polynomial \(P_{1}\) = | \(u_{1} s+\) | \(u_{2}\) | ||

| \(u_{1} s \times P_{2}\) = | \(u_{1} h_{1} s^{3}+\) | \(u_{1} h_{2} s^{2}+\) | \(u_{1} h_{3} s\) | |

| \(u_{2} \times P_{2}\) (offset) = | \(u_{2} h_{1} s^{2}+\) | \(u_{2} h_{2} s+\) | \(u_{2} h_{3}\) | |

| \(P_{1} \times P_{2}\) = column sums = | \(u_{1} h_{1} s^{3}+\) | \(\left(u_{1} h_{2}+u_{2} h_{1}\right) s^{2}+\) | \(\left(u_{1} h_{3}+u_{1} h_{3}\right) s+\) | \(u_{2} h_{3}\) |

1Details of the integration process are not shown. Note, however, that you can easily verify the validity of the given solution by substituting it into the ODE and IC and finding that they are satisfied.

2With \(t_d = a\), the pulse is continuous at zero when it ends.