5.2: Introduction to Computer Organization

- Explains how digital systems such as the computer represent numbers.

- Covers the basics of boolean algebra and binary math.

Computer Architecture

To understand digital signal processing systems, we must understand a little about how computers compute. The modern definition of a computer is an electronic device that performs calculations on data, presenting the results to humans or other computers in a variety of (hopefully useful) ways.

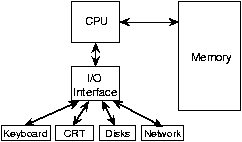

The generic computer contains input devices (keyboard, mouse, A/D (analog-to-digital) converter, etc.), a computational unit, and output devices (monitors, printers, D/A converters). The computational unit is the computer's heart, and usually consists of a central processing unit (CPU), a memory, and an input/output (I/O) interface. Which I/O devices are present on a given computer varies greatly.

- A simple computer operates fundamentally in discrete time. Computers are clocked devices, in which computational steps occur periodically according to ticks of a clock. The clock speed states how often these ticks occur: when you say "I have a 1 GHz computer," you mean that your computer takes 1 nanosecond to perform each step. That is incredibly fast! A "step" does not, unfortunately, necessarily mean a computation like an addition; computers break such computations down into several stages, which means that the clock speed need not express the computational speed. Computational speed is expressed in units of millions of instructions per second (Mips). Your 1 GHz computer (clock speed) may have a computational speed of 200 Mips.

- Computers perform integer (discrete-valued) computations. Computer calculations can be numeric (obeying the laws of arithmetic), logical (obeying the laws of an algebra), or symbolic (obeying any law you like).1 Each computer instruction that performs an elementary numeric calculation (an addition, a multiplication, or a division) does so only for integers. The sum or product of two integers is also an integer, but the quotient of two integers is likely not to be an integer. How does a computer deal with numbers that have digits to the right of the decimal point? This problem is addressed by using the so-called floating-point representation of real numbers. At its heart, however, this representation relies on integer-valued computations.
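The two points above can be checked with a little arithmetic. The following is a minimal sketch using the figures quoted in the text (1 GHz, 200 Mips); the function names are illustrative and not taken from any library.

```python
def clock_period_ns(clock_hz):
    """Duration of one clock tick, in nanoseconds."""
    return 1e9 / clock_hz


def cycles_per_instruction(clock_hz, mips):
    """Average number of clock ticks spent on each instruction."""
    return clock_hz / (mips * 1e6)


print(clock_period_ns(1e9))              # 1.0 (one nanosecond per step)
print(cycles_per_instruction(1e9, 200))  # 5.0 (five steps per instruction)

# Integer division: the quotient of two integers need not be an integer,
# so integer hardware yields a quotient and a remainder instead.
quotient, remainder = divmod(7, 2)
print(quotient, remainder)  # 3 1
print(7 / 2)                # 3.5 (needs a non-integer representation)
```

Under these assumed figures, each instruction takes five clock ticks on average, which is why clock speed alone does not express computational speed.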

Representing Numbers

Focusing on numbers, all numbers can be represented by the positional notation system.2 The b-ary positional representation system uses the position of digits ranging from 0 to b-1 to denote a number. The quantity b is known as the base of the number system. Mathematically, positional systems represent the positive integer n as:

\[\forall d_{k},d_{k}\in \left \{ 0,...,b-1 \right \}:\left ( n=\sum_{k=0}^{\infty } d_{k}b^{k} \right ) \nonumber \]

and we succinctly express n in base-b as:

\[n_{b}=d_{N}d_{N-1}...d_{0} \nonumber \]

The number 25 in base 10 equals:

\[2\times 10^{1}+5\times 10^{0} \nonumber \]

So that the digits representing this number are:

\[d_{0}=5,\; d_{1}=2,\; \text{all other } d_{k}=0 \nonumber \]

This same number in binary (base 2) equals:

\[11001 \quad (1\times 2^{4}+1\times 2^{3}+0\times 2^{2}+0\times 2^{1}+1\times 2^{0}) \nonumber \]

and 19 in hexadecimal (base 16). Fractions between zero and one are represented the same way.

\[\forall d_{k},d_{k}\in \left \{ 0,...,b-1 \right \}:\left ( f=\sum_{k=-\infty }^{-1} d_{k}b^{k} \right ) \nonumber \]
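The positional formulas above translate directly into repeated division (for integers) and repeated multiplication (for fractions). The sketch below is one way to compute the digits; the function names `to_digits`, `from_digits`, and `fraction_digits` are hypothetical helpers introduced only for illustration.

```python
from fractions import Fraction


def to_digits(n, b):
    """Digits d_N ... d_0 of the non-negative integer n in base b."""
    if n == 0:
        return [0]
    digits = []
    while n > 0:
        n, d = divmod(n, b)  # the remainder is the next digit, d_0 first
        digits.append(d)
    return digits[::-1]      # most significant digit first


def from_digits(digits, b):
    """Evaluate the sum of d_k * b**k for digits given most significant first."""
    n = 0
    for d in digits:
        n = n * b + d
    return n


def fraction_digits(f, b, count):
    """First `count` digits d_-1, d_-2, ... of a fraction 0 <= f < 1 in base b."""
    digits = []
    for _ in range(count):
        f *= b
        d = int(f)           # the integer part is the next digit
        digits.append(d)
        f -= d
    return digits


print(to_digits(25, 10))                     # [2, 5]
print(to_digits(25, 2))                      # [1, 1, 0, 0, 1]
print(to_digits(25, 16))                     # [1, 9]
print(from_digits([1, 1, 0, 0, 1], 2))       # 25
print(fraction_digits(Fraction(5, 8), 2, 3)) # [1, 0, 1], i.e. 0.101 in binary
```

`Fraction` is used so that the fractional digits are computed exactly rather than through the floating-point approximations discussed later.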

All numbers can be represented by their sign, integer and fractional parts. Complex numbers can be thought of as two real numbers that obey special rules to manipulate them.

Humans use base 10, commonly assumed to be due to us having ten fingers. Digital computers use the base 2 or binary number representation, each digit of which is known as a bit (binary digit).

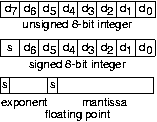

Here, each bit is represented as a voltage that is either "high" or "low," thereby representing "1" or "0," respectively. To represent signed values, we tack on a special bit, the sign bit, to express the sign. The computer's memory consists of an ordered sequence of bytes, each a collection of eight bits. A byte can therefore represent an unsigned number ranging from 0 to 255. If we take one of the bits and make it the sign bit, the same byte can represent numbers ranging from -128 to 127. But a computer cannot represent all possible real numbers. The fault is not with the binary number system; rather, having only a finite number of bytes is the problem. While a gigabyte of memory may seem to be a lot, it takes an infinite number of bits to represent π. Since we want to store many numbers in a computer's memory, we are restricted to those that have a finite binary representation. Large integers can be represented by an ordered sequence of bytes. Common lengths, usually expressed in terms of the number of bits, are 16, 32, and 64. Thus, an unsigned 32-bit number can represent integers ranging between 0 and \(2^{32}-1\) (4,294,967,295), a number almost big enough to enumerate every human in the world!3
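To see how the same byte can stand for different numbers, the sketch below interprets one bit pattern both ways and tests which integers fit in a given number of bytes. It assumes the two's-complement convention for signed values, which is what Python's `int.from_bytes` and `int.to_bytes` use with `signed=True`; the text itself does not name the encoding. The helper `fits` is hypothetical.

```python
raw = bytes([0b11111111])  # one byte with all eight bits set

as_unsigned = int.from_bytes(raw, byteorder="big", signed=False)
as_signed = int.from_bytes(raw, byteorder="big", signed=True)
print(as_unsigned, as_signed)  # 255 -1


def fits(n, num_bytes, signed):
    """Return True if integer n can be stored in num_bytes bytes."""
    try:
        n.to_bytes(num_bytes, byteorder="big", signed=signed)
        return True
    except OverflowError:  # raised when n is outside the representable range
        return False


print(fits(255, 1, signed=False))        # True
print(fits(256, 1, signed=False))        # False
print(fits(-128, 1, signed=True))        # True
print(fits(128, 1, signed=True))         # False
print(fits(2**32 - 1, 4, signed=False))  # True
```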

For both 32-bit and 64-bit integer representations, what are the largest numbers that can be represented if a sign bit must also be included?

Solution

For b-bit signed integers, the largest number is \(2^{b-1}-1\). For b=32, we have 2,147,483,647 and for b=64, we have 9,223,372,036,854,775,807 or about \(9.2\times 10^{18}\).
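The values in this solution can be reproduced with Python's arbitrary-precision integers. This is only a quick check of the formula; `largest_signed` is a hypothetical helper name.

```python
def largest_signed(bits):
    """Largest integer representable when one of `bits` bits holds the sign."""
    return 2 ** (bits - 1) - 1


for bits in (32, 64):
    print(bits, f"{largest_signed(bits):,}")
# 32 2,147,483,647
# 64 9,223,372,036,854,775,807
```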

While this system represents integers well, how about numbers having nonzero digits to the right of the decimal point? In other words, how are numbers that have fractional parts represented? For such numbers, the binary representation system is used, but with a little more complexity. The floating-point system uses a number of bytes (typically 4 or 8) to represent the number, but with one byte (sometimes two bytes) reserved to represent the exponent \(e\) of a power-of-two multiplier for the mantissa \(m\), which is expressed by the remaining bytes:

\[x=m2^{e} \nonumber \]

The mantissa is usually taken to be a binary fraction having a magnitude in the range

\[\left [ \frac{1}{2},1\right ) \nonumber \]

which means that the binary representation is such that \(d_{-1}=1\).4 The number zero is an exception to this rule, and it is the only floating point number having a zero fraction. The sign of the mantissa represents the sign of the number and the exponent can be a signed integer.
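Python's standard `math.frexp` happens to return a mantissa and exponent in exactly this normalization (magnitude of the mantissa in [1/2, 1), or zero), so it can be used to explore the decomposition. Note the assumption: Python floats are stored in a different internal layout, and `frexp` merely presents the value in the form described here. The helper `leading_fraction_bit` is hypothetical.

```python
import math


def leading_fraction_bit(m):
    """The binary digit d_-1 of a mantissa with magnitude below 1."""
    return int(abs(m) * 2)


for x in (2.5, -6.0, 0.0):
    m, e = math.frexp(x)              # x == m * 2**e
    assert x == math.ldexp(m, e)      # ldexp rebuilds x from (m, e)
    print(x, m, e, leading_fraction_bit(m))
# 2.5 0.625 2 1
# -6.0 -0.75 3 1
# 0.0 0.0 0 0
```

The last line shows the exception noted above: zero is the only value whose leading fraction digit is not 1.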

A computer's representation of integers is either perfect or only approximate, the latter situation occurring when the integer exceeds the range of numbers that a limited set of bytes can represent. Floating point representations have similar representation problems: if the number

What are the largest and smallest numbers that can be represented in 32-bit floating point? In 64-bit floating point that has sixteen bits allocated to the exponent? Note that both exponent and mantissa require a sign bit.

Solution

In floating point, the number of bits in the exponent determines the largest and smallest representable numbers. For 32-bit floating point, the largest (smallest) numbers are

\[2^{\pm 127}=1.7\times 10^{38}\; (5.9\times 10^{-39}) \nonumber \]

For 64-bit floating point, the largest number is about

\[10^{9863} \nonumber \]
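These magnitudes can be verified numerically. The sketch below follows the simplified format of this exercise (a signed exponent of 8 or 16 bits, so a largest exponent of 127 or 32767); it does not model any particular hardware standard, and the variable names are illustrative.

```python
import math

# 32-bit case: 8-bit exponent including its sign, so |e| <= 127.
largest_32 = 2.0 ** 127
smallest_32 = 2.0 ** -127
print(f"{largest_32:.1e}")   # 1.7e+38
print(f"{smallest_32:.1e}")  # 5.9e-39

# 64-bit case with a 16-bit exponent: the largest exponent is 2**15 - 1.
# 2**32767 is too large for a Python float, so work with its base-10 logarithm.
exp10_64 = (2 ** 15 - 1) * math.log10(2)
print(math.floor(exp10_64))  # 9863
```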

So long as the integers aren't too large, they can be represented exactly in a computer using the binary positional notation. Electronic circuits that make up the physical computer can add and subtract integers without error. (This statement isn't quite true; when does addition cause problems?)
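One situation worth examining for the question just posed is a sum that needs more bits than the storage provides. The sketch below assumes that the hardware simply discards the bits that do not fit (wraparound); real processors may instead flag, saturate, or trap, so treat this as an illustration only. `add_fixed_width` is a hypothetical helper.

```python
def add_fixed_width(a, b, bits=8):
    """Add two unsigned integers, keeping only the lowest `bits` bits.

    Returns (stored_result, overflowed).
    """
    mask = (1 << bits) - 1       # e.g. 0b11111111 for 8 bits
    total = a + b
    return total & mask, total > mask


print(add_fixed_width(25, 7))     # (32, False)  fits in one byte
print(add_fixed_width(200, 100))  # (44, True)   300 does not fit in one byte
```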

Computer Arithmetic and Logic

The binary addition and multiplication tables are

\[\begin{pmatrix} 0+0=0\\ 0+1=1\\ 1+1=10\\ 1+0=1\\ \\ 0\times 0=0\\ 0\times 1=0\\ 1\times 1=1\\ 1\times 0=0 \end{pmatrix} \nonumber \]

Note that if carries are ignored,7 subtraction of two single-digit binary numbers yields the same bit as addition. Computers use high and low voltage values to express a bit, and an array of such voltages expresses numbers akin to positional notation. Logic circuits perform arithmetic operations.
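The claim about single-digit subtraction can be checked exhaustively, since there are only four input pairs. In this sketch, `add_bits` and `subtract_bits` are hypothetical helpers; ignoring the carry (or borrow) is modeled by reducing the result modulo 2.

```python
def add_bits(a, b):
    """Add two bits; return (carry, sum_bit)."""
    total = a + b
    return total // 2, total % 2


def subtract_bits(a, b):
    """Difference bit of a - b with any borrow ignored."""
    return (a - b) % 2


for a in (0, 1):
    for b in (0, 1):
        carry, sum_bit = add_bits(a, b)
        # With carries ignored, addition and subtraction give the same bit.
        assert sum_bit == subtract_bits(a, b)
        print(f"{a}+{b}={carry}{sum_bit}  {a}-{b} -> {subtract_bits(a, b)}")
```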

Add twenty-five and seven in base 2. Note the carries that might occur. Why is the result "nice"?

Solution

\[25=11001_{2}\; \text{and}\; 7=111_{2} \nonumber \]

We find that

\[11001_{2}+111_{2}=100000_{2}=32 \nonumber \]
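The carries in this exercise can be traced with a digit-by-digit addition, working from the rightmost position as one would by hand. This is a minimal sketch; `add_binary` is a hypothetical helper that operates on strings of binary digits.

```python
def add_binary(x, y):
    """Add two binary digit strings; return (sum_string, carries).

    `carries` lists the carry out of each position, rightmost position first.
    """
    width = max(len(x), len(y))
    x, y = x.zfill(width), y.zfill(width)  # pad the shorter number with zeros
    carry = 0
    result = []
    carries = []
    for a, b in zip(reversed(x), reversed(y)):
        total = int(a) + int(b) + carry
        result.append(str(total % 2))      # digit that stays in this position
        carry = total // 2                 # digit passed to the next position
        carries.append(carry)
    if carry:
        result.append("1")
    return "".join(reversed(result)), carries


total, carries = add_binary("11001", "111")  # 25 + 7
print(total, int(total, 2))  # 100000 32
print(carries)               # [1, 1, 1, 1, 1]
```

Every position produces a carry, which is why the sum collapses to a single 1 followed by zeros: the result is a power of two.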

The variables of logic indicate truth or falsehood. A∩B, the AND of A and B, represents a statement that both A and B must be true for the statement to be true. You use this kind of statement to tell search engines that you want to restrict hits to cases where both of the events A and B occur. A∪B, the OR of A and B, yields a value of truth if either is true. Note that if we represent truth by a "1" and falsehood by a "0," binary multiplication corresponds to AND and addition (ignoring carries) to XOR. XOR, the exclusive or operator, is true when exactly one of A and B is true; it equals the union of \(A\cap \overline{B}\) and \(\overline{A}\cap B\), where the overbar denotes NOT. The English mathematician George Boole discovered this equivalence in the mid-nineteenth century. It laid the foundation for what we now call Boolean algebra, which expresses logical statements as equations. More importantly, any computer using base-2 representations and arithmetic can also easily evaluate logical statements. This fact makes an integer-based computational device much more powerful than might be apparent.
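The correspondence between binary arithmetic and logic can be verified over all four combinations of truth values. The sketch below builds each logical result from arithmetic on 0 and 1 and compares it with Python's bitwise operators; `truth_table` is a hypothetical helper, and the formula used for OR (a + b - a*b) is one common arithmetic encoding, not something stated in the text.

```python
from itertools import product


def truth_table():
    """Rows of (a, b, AND, OR, XOR) computed with integer arithmetic only."""
    rows = []
    for a, b in product((0, 1), repeat=2):
        and_ = a * b          # binary multiplication
        xor_ = (a + b) % 2    # binary addition with the carry ignored
        or_ = a + b - a * b   # assumed arithmetic encoding of OR
        rows.append((a, b, and_, or_, xor_))
    return rows


for a, b, and_, or_, xor_ in truth_table():
    # Compare against Python's built-in bitwise operators.
    assert and_ == (a & b)
    assert or_ == (a | b)
    assert xor_ == (a ^ b)
    # XOR holds exactly when one input is true and the other is false.
    assert xor_ == ((a & (1 - b)) | ((1 - a) & b))
    print(a, b, and_, or_, xor_)
# 0 0 0 0 0
# 0 1 0 1 1
# 1 0 0 1 1
# 1 1 1 1 0
```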

Footnotes

1. An example of a symbolic computation is sorting a list of names.

2. Alternative number representation systems exist. For example, we could use stick figure counting or Roman numerals. These were useful in ancient times, but very limiting when it comes to arithmetic calculations: ever tried to divide two Roman numerals?

3. You need one more bit to do that.

4. In some computers, this normalization is taken to an extreme: the leading binary digit is not explicitly expressed, providing an extra bit to represent the mantissa a little more accurately. This convention is known as the hidden-ones notation.

5. See if you can find this representation.

6. Note that there will always be numbers that have an infinite representation in any chosen positional system. The choice of base defines which do and which don't. If you were thinking that base 10 numbers would solve this inaccuracy, note that 1/3=0.333333... has an infinite representation in decimal (and binary for that matter), but has finite representation in base 3.

7. A carry means that a computation performed at a given position affects other positions as well. Here, 1+1=10 is an example of a computation that involves a carry.