1.3: Application - Logic Circuits

- Page ID

- 6710

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\( \newcommand{\id}{\mathrm{id}}\) \( \newcommand{\Span}{\mathrm{span}}\)

( \newcommand{\kernel}{\mathrm{null}\,}\) \( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\) \( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\) \( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\id}{\mathrm{id}}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\kernel}{\mathrm{null}\,}\)

\( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\)

\( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\)

\( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\) \( \newcommand{\AA}{\unicode[.8,0]{x212B}}\)

\( \newcommand{\vectorA}[1]{\vec{#1}} % arrow\)

\( \newcommand{\vectorAt}[1]{\vec{\text{#1}}} % arrow\)

\( \newcommand{\vectorB}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vectorC}[1]{\textbf{#1}} \)

\( \newcommand{\vectorD}[1]{\overrightarrow{#1}} \)

\( \newcommand{\vectorDt}[1]{\overrightarrow{\text{#1}}} \)

\( \newcommand{\vectE}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash{\mathbf {#1}}}} \)

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\(\newcommand{\avec}{\mathbf a}\) \(\newcommand{\bvec}{\mathbf b}\) \(\newcommand{\cvec}{\mathbf c}\) \(\newcommand{\dvec}{\mathbf d}\) \(\newcommand{\dtil}{\widetilde{\mathbf d}}\) \(\newcommand{\evec}{\mathbf e}\) \(\newcommand{\fvec}{\mathbf f}\) \(\newcommand{\nvec}{\mathbf n}\) \(\newcommand{\pvec}{\mathbf p}\) \(\newcommand{\qvec}{\mathbf q}\) \(\newcommand{\svec}{\mathbf s}\) \(\newcommand{\tvec}{\mathbf t}\) \(\newcommand{\uvec}{\mathbf u}\) \(\newcommand{\vvec}{\mathbf v}\) \(\newcommand{\wvec}{\mathbf w}\) \(\newcommand{\xvec}{\mathbf x}\) \(\newcommand{\yvec}{\mathbf y}\) \(\newcommand{\zvec}{\mathbf z}\) \(\newcommand{\rvec}{\mathbf r}\) \(\newcommand{\mvec}{\mathbf m}\) \(\newcommand{\zerovec}{\mathbf 0}\) \(\newcommand{\onevec}{\mathbf 1}\) \(\newcommand{\real}{\mathbb R}\) \(\newcommand{\twovec}[2]{\left[\begin{array}{r}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\ctwovec}[2]{\left[\begin{array}{c}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\threevec}[3]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\cthreevec}[3]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\fourvec}[4]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\cfourvec}[4]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\fivevec}[5]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\cfivevec}[5]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\mattwo}[4]{\left[\begin{array}{rr}#1 \amp #2 \\ #3 \amp #4 \\ \end{array}\right]}\) \(\newcommand{\laspan}[1]{\text{Span}\{#1\}}\) \(\newcommand{\bcal}{\cal B}\) \(\newcommand{\ccal}{\cal C}\) \(\newcommand{\scal}{\cal S}\) \(\newcommand{\wcal}{\cal W}\) \(\newcommand{\ecal}{\cal E}\) \(\newcommand{\coords}[2]{\left\{#1\right\}_{#2}}\) \(\newcommand{\gray}[1]{\color{gray}{#1}}\) \(\newcommand{\lgray}[1]{\color{lightgray}{#1}}\) \(\newcommand{\rank}{\operatorname{rank}}\) \(\newcommand{\row}{\text{Row}}\) \(\newcommand{\col}{\text{Col}}\) \(\renewcommand{\row}{\text{Row}}\) \(\newcommand{\nul}{\text{Nul}}\) \(\newcommand{\var}{\text{Var}}\) \(\newcommand{\corr}{\text{corr}}\) \(\newcommand{\len}[1]{\left|#1\right|}\) \(\newcommand{\bbar}{\overline{\bvec}}\) \(\newcommand{\bhat}{\widehat{\bvec}}\) \(\newcommand{\bperp}{\bvec^\perp}\) \(\newcommand{\xhat}{\widehat{\xvec}}\) \(\newcommand{\vhat}{\widehat{\vvec}}\) \(\newcommand{\uhat}{\widehat{\uvec}}\) \(\newcommand{\what}{\widehat{\wvec}}\) \(\newcommand{\Sighat}{\widehat{\Sigma}}\) \(\newcommand{\lt}{<}\) \(\newcommand{\gt}{>}\) \(\newcommand{\amp}{&}\) \(\definecolor{fillinmathshade}{gray}{0.9}\)Computers have a reputation—not always deserved—for being “logical.” But fundamentally, deep down, they are made of logic in a very real sense. The building blocks of computers are logic gates, which are electronic components that compute the values of simple propositions such as \(p ∧ q and ¬p\). (Each gate is in turn built of even smaller electronic components called transistors, but this needn’t concern us here.)

A wire in a computer can be in one of two states, which we can think of as being on and off. These two states can be naturally associated with the Boolean values \(\mathbb{T}\) and \(\mathbb{F}\). When a computer computes, the multitude of wires inside it are turned on and off in patterns that are determined by certain rules. The rules involved can be most naturally expressed in terms of logic. A simple rule might be, “turn wire \(C\) on whenever wire \(A\) is on and wire \(B\) is on.” This rule can be implemented in hardware as an AND gate. An and gate is an electronic component with two input wires and one output wire, whose job is to turn its output on when both of its inputs are on and to turn its output off in any other case. If we associate “on” with \(\mathbb{T}\) and “off” with \(\mathbb{F}\), and if we give the names \(A\) and \(B\) to the inputs of the gate, then the gate computes the value of the logical expression \(A ∧ B\). In effect, \(A\) is a proposition with the meaning “the first input is on,” and \(B\) is a proposition with the meaning “the second input is on.” The and gate functions to ensure that the output is described by the proposition \(A ∧ B\). That is, the output is on if and only if the first input is on and the second input is on.

An OR gate is an electronic component with two inputs and one output which turns its output on if either (or both) of its inputs is on. If the inputs are given names \(A\) and \(B\), then the or gate computes the logical value of \(A∨B\). A NOT gate has one input and one output, and it turns its output off when the input is on and on when the input is off. If the input is named \(A\), then the not gate computes the value of \(¬A\).

Other types of logic gates are, of course, possible. Gates could be made to compute \(A → B\) or \(A⊕B\), for example. However, any computation that can be performed by logic gates can be done using only \(\small{AND}\), \(\small{OR}\), and \(\small{NOT}\) gates, as we will see below. (In practice, however, \(\small{NAND}\) gates and \(\small{NOR}\) gates, which compute the values of \(¬(A ∧ B)\) and \(¬(A ∨ B)\) respectively, are often used because they are easier to build from transistors than \(\small{AND} and \(\small{OR}\) gates.)

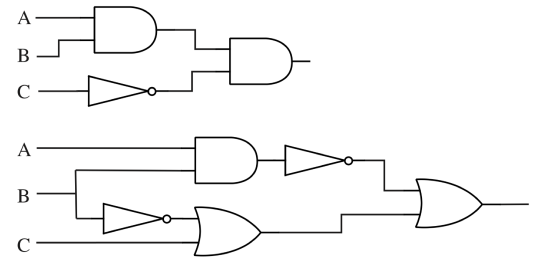

Figure 1.3: The standard symbols for the three basic logic gates, and a logic circuit that computes the value of the logical expression(¬A) ∧ (B ∨ ¬(A ∧ C)). The input wires to each logic gate are on the left, with the output wire on the right. Note that when wires cross each other in a diagram such as this, the wires don’t actually intersect unless there is a black circle at the point where they cross.

The three types of logic gates are represented by standard symbols, as shown in Figure 1.3. Since the inputs and outputs of logic gates are just wires carrying on/off signals, logic gates can be wired together by connecting outputs from some gates to inputs of other gates. The result is a logic circuit. An example is also shown in Figure 1.3.

The logic circuit in the figure has three inputs, labeled \(A, B,\) and \(C\). The circuit computes the value of the compound proposition \((¬A) ∧ (B ∨ ¬(A ∧C))\). That is, when \(A\) represents the proposition “the input wire labeled \(A\) is on,” and similarly for \(B\) and \(C\), then the output of the circuit is on if and only if the value of the compound proposition \((¬A) ∧ (B ∨ ¬(A ∧ C))\) is true.

Given any compound proposition made from the operators \(∧, ∨,\) and \(¬\), it is possible to build a logic circuit that computes the value of that proposition. The proposition itself is a blueprint for the circuit. As noted in Section 1.1, every logical operator that we have encountered can be expressed in terms of \(∧, ∨\), and \(¬\), so in fact every compound proposition that we know how to write can be computed by a logic circuit.

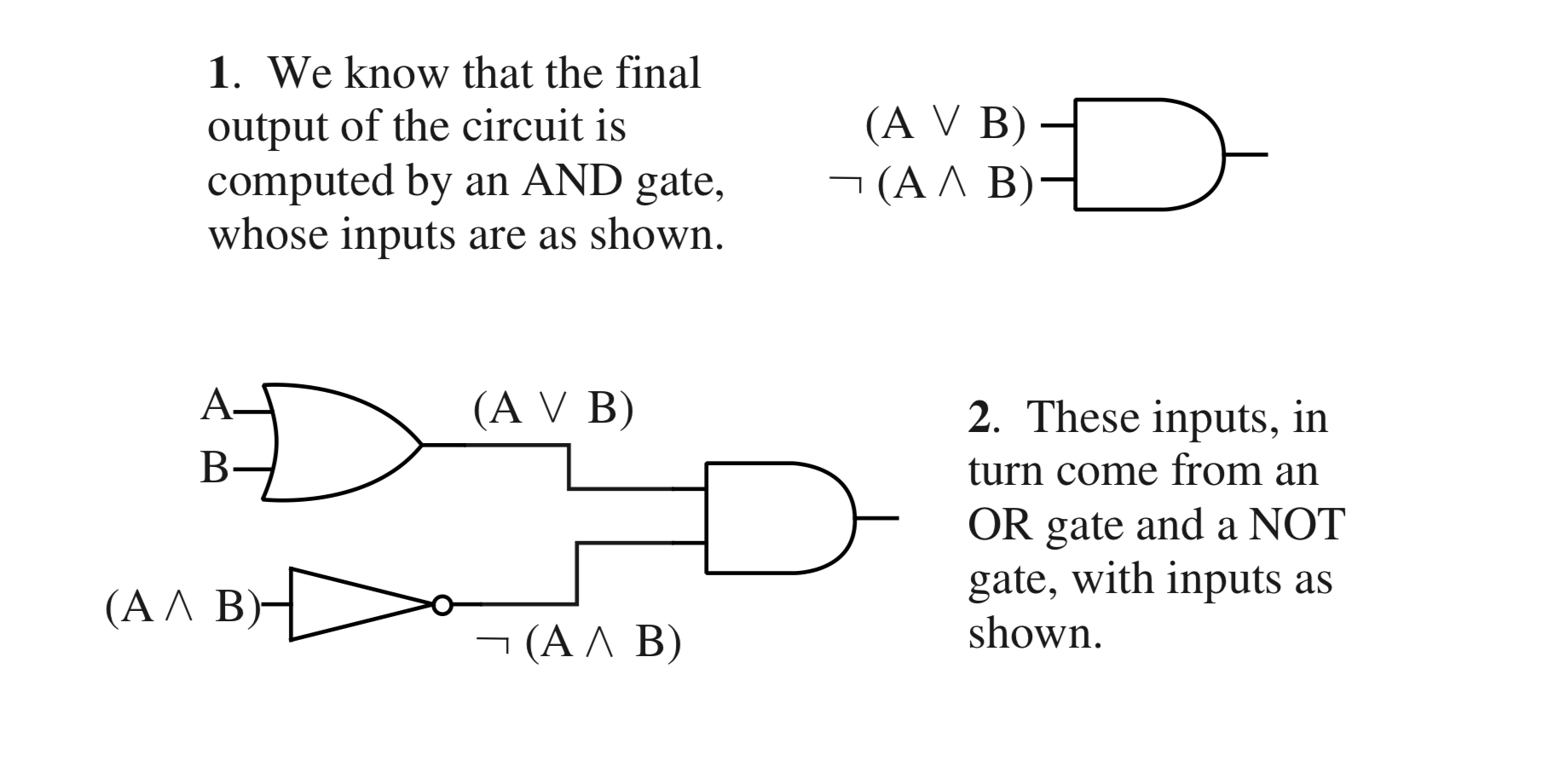

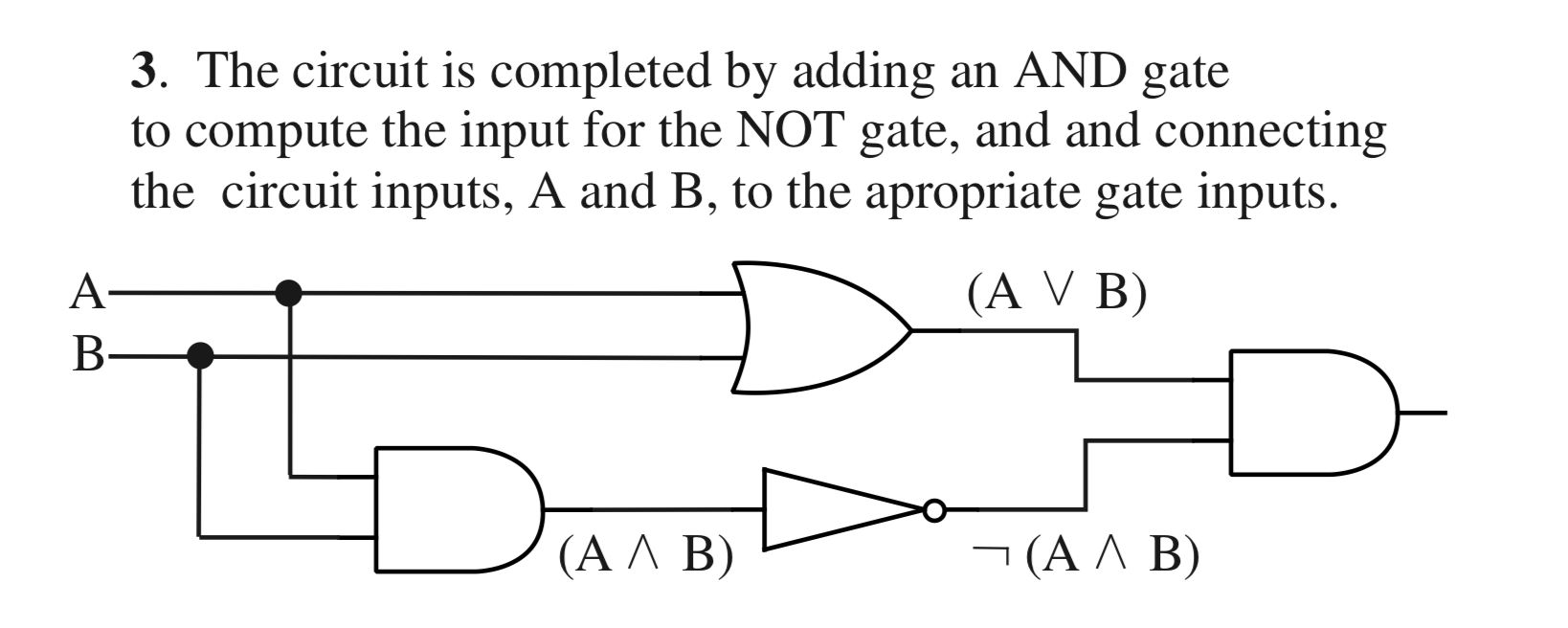

Given a proposition constructed from \(∧, ∨,\) and \(¬\) operators, it is easy to build a circuit to compute it. First, identify the main operator in the proposition—the one whose value will be computed last. Consider \((A ∨B) ∧ ¬(A ∧ B)\). This circuit has two input values, \(A\) and \(B\), which are represented by wires coming into the circuit. The circuit has an output wire that represents the computed value of the proposition. The main operator in \((A ∨ B) ∧ ¬(A ∧ B)\), is the first \(∧\), which computes the value of the expression as a whole by combining the values of the subexpressions \(A ∨ B\) and \(¬(A ∧ B)\). This \(∧\) operator corresponds to an and gate in the circuit that computes the final output of the circuit.

Once the main operator has been identified and represented as a logic gate, you just have to build circuits to compute the input or inputs to that operator. In the example, the inputs to the main and gate come from two subcircuits. One subcircuit computes the value of \(A ∨ B\) and the other computes the value of \(¬(A ∧ B)\). Building each subcircuit is a separate problem, but smaller than the problem you started with. Eventually, you’ll come to a gate whose input comes directly from one of the input wires—\(A\) or \(B\) in this case—instead of from a subcircuit.

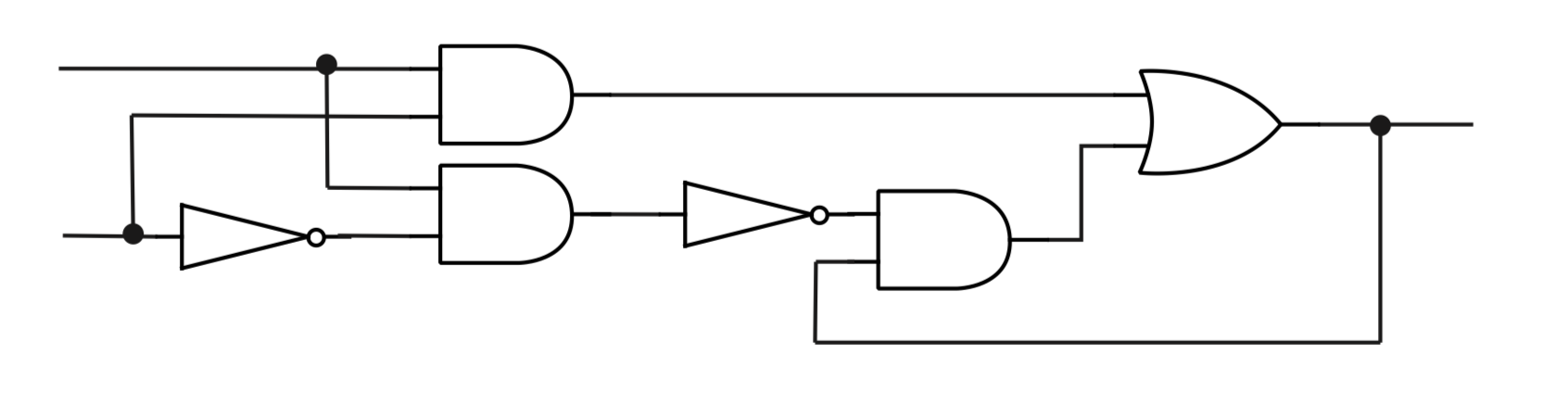

So, every compound proposition is computed by a logic circuit with one output wire. Is the reverse true? That is, given a logic circuit with one output, is there a proposition that expresses the value of the output in terms of the values of the inputs? Not quite. When you wire together some logic gates to make a circuit, there is nothing to stop you from introducing feedback loops. A feedback loop occurs when the output from a gate is connected—possibly through one or more intermediate gates—back to an input of the same gate. Figure 1.5 shows an example of a circuit with a feedback loop. Feedback loops cannot be described by compound propositions, basically because there is no place to start, no input to associate with a propositional variable. But feedback loops are the only thing that can go wrong. A logic circuit that does not contain any feedback loops is called a combinatorial logic circuit. Every combinatorial logic circuit with just one output computes the value of some compound proposition. The propositional variables in the compound proposition are just names associated with the input wires of the circuit. (Of course, if the circuit has more than one output, you can simply use a different proposition for each output.)

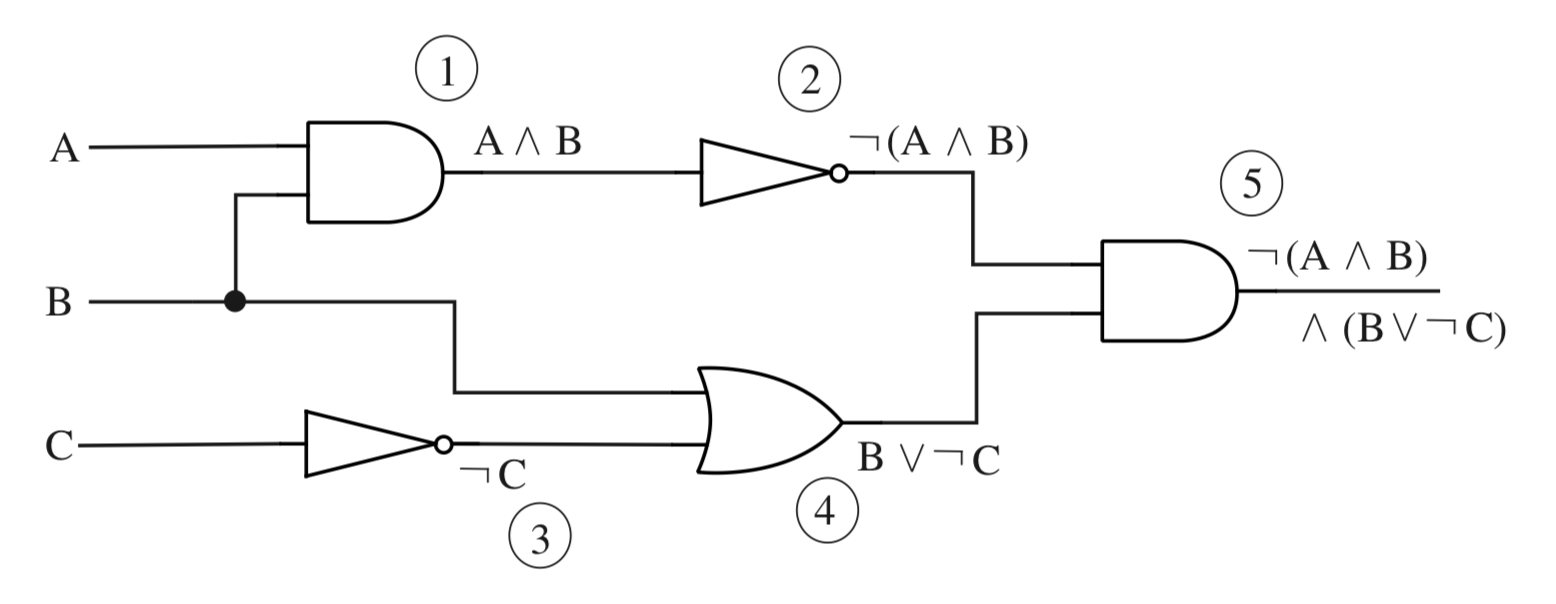

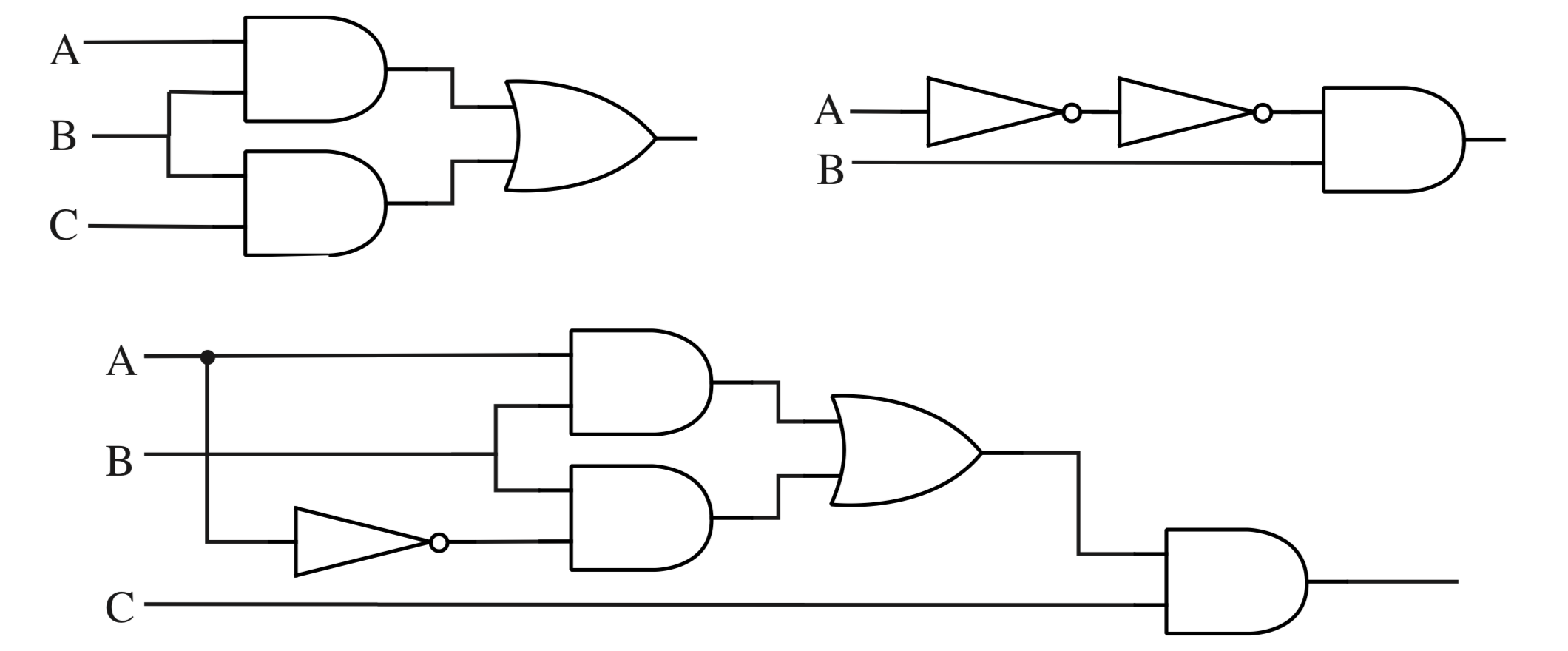

The key to understanding why this is true is to note that each wire in the circuit—not just the final output wire—represents the value of some proposition. Furthermore, once you know which proposition is represented by each input wire to a gate, it’s obvious what proposition is represented by the output: You just combine the input propositions with the appropriate \(∧, ∨,\) or \(¬\) operator, depending on what type of gate it is. To find the proposition associated with the final output, you just have to start from the inputs and move through the circuit, labeling the output wire of each gate with the proposition that it represents. Figure 1.6 illustrates this process.

Figure 1.4: Stages in the construction of a circuit that computes the compound proposition \((A ∨ B) ∧ ¬(A ∧ B)\).

So, compound propositions correspond naturally with combinatorial logic circuits. But we have still not quite settled the question of just how powerful these circuits and propositions are. We’ve looked at a number of logical operators and noted that they can all be expressed in terms of \(∧, ∨,\) and \(¬\). But might there be other operators that cannot be so expressed? Equivalently, might there be other types of logic gates—possibly with some large number of inputs—whose computations cannot be duplicated with \(\small{AND}\), \(\small{OR}\), and \(\small{NOT}\) gates? Any logical operator or logic gate computes a value for each possible combination of logical values of its inputs. We could always make a truth table showing the output for each possible combination of inputs. As it turns out, given any such truth table, it is possible to find a proposition, containing only the \(∧, ∨\), and \(¬\) operators, whose value for each combination of inputs is given precisely by that table.

Figure 1.5: This circuit contains a feedback loop, so it is not a combinatorial logic circuit. The feedback loop includes the and gate and the or gate on the right. This circuit does not compute the value of a compound proposition. This circuit does, however, play an important role in computer memories, since it can be used to store a logical value.

To see why this is true, it is useful to introduce a particular type of compound proposition. Define a simple term to be either a propositional variable or the negation of a propositional variable. A conjunction of simple terms would then consist of one or more simple terms put together with∧ operators. (A “conjunction of one simple term” is just a single simple term by itself. This might not make grammatical sense, but it’s the way mathematicians think.) Some examples of conjunctions of simple terms would be \(p∧q, p, ¬q\), and \(p∧¬r∧¬w∧s∧t\). Finally, we can take one or more such conjunctions and join them into a “disjunction of conjunctions of simple terms.” This is the type of compound proposition we need. We can avoid some redundancy by assuming that no propositional variable occurs more than once in a single conjunction (since \(p∧p\) can be replaced by \(p\), and if \(p\) and \(¬p\) both occur in a conjunction, then the value of the conjuction is false, and it can be eliminated.) We can also assume that the same conjunction does not occur twice in the disjunction.

Definition 1.5

A compound proposition is said to be in disjunctive normal form, or DNF, if it is a disjunction of conjunctions of simple terms, and if, furthermore, each propositional variable occurs at most once in each conjunction and each conjunction occurs at most once in the disjunction.

Using \(p, q, r, s, A,\) and \(B\) as propositional variables, here are a few examples of propositions that are in disjunctive normal form:

\((p ∧ q ∧ r) ∨ (p ∧ ¬q ∧ r ∧ s) ∨ (¬p ∧ ¬q)\)

\((p∧¬q)\)

\((A∧¬B)∨(¬A∧B)\)

\(p ∨ (¬p ∧ q) ∨ (¬p ∧ ¬q ∧ r) ∨ (¬p ∧ ¬q ∧ ¬r ∧ w)\)

Figure 1.6: Finding the proposition whose value is computed by a combinatorial logic circuit. Each wire in the circuit is labeled with the proposition that it represents. The numbering of the labels shows one of the orders in which they can be associated with the wires. The circuit as a whole computes the value of \(¬(A ∧ B) ∧ (B ∨ ¬C)\).

Propositions in DNF are just what we need to deal with input/output tables of the type that we have been discussing. Any such table can be computed by a proposition in disjunctive normal form. It follows that it is possible to build a circuit to compute that table using only \(\small{AND}\), \(\small{OR}\), and \(\small{NOT}\) gates.

Theorem 1.3

Consider a table that lists a logical output value for every combination of values of several propositional variables. Assume that at least one of the output values is true. Then there is a proposition containing those variables such that the value of the proposition for each possible combination of the values of the variables is precisely the value specified in the table. It is possible to choose the proposition to be in disjunctive normal form.

Proof. Consider any row in the table for which the output value is \(\mathbb{T}\). Form a conjunction of simple terms as follows: For each variable, \(p\), whose value is \(mathbb{T}\) in that row, include \(p\) itself in the conjunction; for each variable, \(q\), whose value is \(mathbb{F}\) in the row, include \(¬q\) in the conjunction. The value of this conjunction is \(mathbb{T}\) for the combination of variable values given in that row of the table, since each of the terms in the conjuction is true for that combination of variables. Furthermore, for any other possible combination of variable values, the value of the conjunction will be \(\mathbb{F}\), since at least one of the simple terms in the conjunction will be false.

Take the disjunction of all such conjunctions constructed in this way, for each row in the table where the output value is true. This disjunction has the value \(mathbb{T}\) if and only if one of the conjunctions that make it up has the value \(\mathbb{T}\)—and that is precisely when the output value specified by the table is \(\mathbb{T}\). So, this disjunction of conjunctions satisfies the requirements of the theorem.

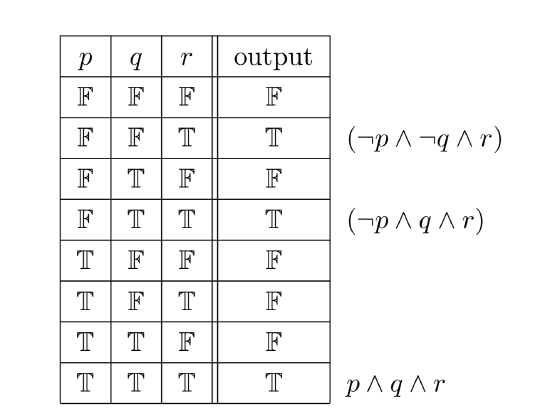

As an example, consider the table in Figure 1.7. This table specifies a desired output value for each possible combination of values for the propositional variables \(p, q,\) and \(r\). Look at the second row of the table, where the output value is true. According to the proof of the theorem, this row corresponds to the conjunction \((¬p ∧ ¬q ∧ r)\). This conjunction is true when \(p\) is false, \(q\) is false, and \(r\) is true; in all other cases it is false, since in any other case at least one of the terms \(¬p, ¬q\), or \(r\) is false. The other two rows where the output is true give two more conjunctions. The three conjunctions are combined to produce the DNF proposition \((¬p∧¬q∧r)∨(¬p∧q∧r)∨(p∧q∧r)\). This proposition computes all the output values specified in the table. Using this proposition as a blueprint, we get a logic circuit whose outputs match those given in the table.

Now, given any combinatorial logic circuit, there are many other circuits that have the same input/output behavior. When two circuits have the same input/output table, the compound propositions associated with the two circuits are logically equivalent. To put this another way, propositions that are logically equivalent produce circuits that have the same input/output behavior. As a practical matter, we will usually prefer the circuit that is simpler. The correspondence between circuits and propositions allows us to apply Boolean algebra to the simplification of circuits.

For example, consider the DNF proposition corresponding to the table in Figure 1.7. In \((¬p∧¬q∧r)∨(¬p∧q∧r)∨(p∧q∧r)\), we can factor \((q∧r)\) from the last two terms, giving \((¬p∧¬q∧r)∨((¬p∨p)∧(q∧r))\). Since \(¬p∨p ≡ \mathbb{T}\), and \(\mathbb{T}∧Q ≡ Q\) for any proposition \(Q\), this can be simplified to \((¬p ∧ ¬q ∧ r) ∨ (q ∧ r)\). Again, we can apply the distributive law to this to factor out an \(r\), giving \(((¬p ∧ ¬q) ∨ q) ∧ r)\). This compound proposition is logically equivalent to the one we started with, but implementing it in a circuit requires only five logic gates, instead of the ten required by the original proposition.\(^8\)

\(^8\small{No, I didn’t count wrong. There are eleven logical operators in the original expression, but you can get by with ten gates in the circuit: Use a single not gate to compute¬p, and connect the output of that gate to two different and gates. Reusing the output of a logic gate is an obvious way to simplify circuits that does not correspond to any operation on propositions.}\)

Figure 1.7: An input/output table specifying a desired output for each combination of values of the propositional variables p, q, and r. Each row where the output is T corresponds to a conjunction, shown next to that row in the table. The disjunction of these conjunctions is a proposition whose output values are precisely those specified by the table.

If you start with a circuit instead of a proposition, it is often possible to find the associated proposition, simplify it using Boolean algebra, and use the simplified proposition to build an equivalent circuit that is simpler than the original.

All this explains nicely the relationship between logic and circuits, but it doesn’t explain why logic circuits should be used in computers in the first place. Part of the explanation is found in the fact that computers use binary numbers. A binary number is a string of zeros and ones. Binary numbers are easy to represent in an electronic device like a computer: Each position in the number corresponds to a wire. When the wire is on, it represents one; when the wire is off, it represents zero. When we are thinking in terms of logic, the same states of the wire represent true and false, but either representation is just an interpretation of the reality, which is a wire that is on or off. The question is whether the interpretation is fruitful.

Once wires are thought of as representing zeros and ones, we can build circuits to do computations with binary numbers. Which computations?

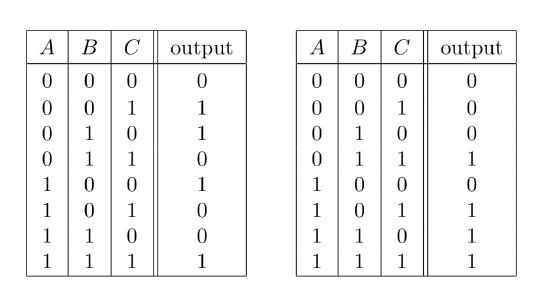

Figure 1.8: Input/output tables for the addition of three binary digits, \(A, B,\) and \(C\).

Any that we want! If we know what the answer should be for each combination of inputs, then by Theorem 1.3 we can build a circuit to compute that answer. Of course, the procedure described in that theorem is only practical for small circuits, but small circuits can be used as building blocks to make all the calculating circuits in a computer.

For example, let’s look at binary addition. To add two ordinary, decimal numbers, you line them up one on top of the other, and add the digits in each column. In each column, there might also be a carry from the previous column. To add up a column, you only need to remember a small number of rules, such as \(7+6+1 = 14\) and \(3+5+0 = 8\). For binary addition, it’s even easier, since the only digits are 0 and 1. There are only eight rules:

|

\(0 + 0 + 0 = 00\) \(0 + 0 + 1 = 01\) \(0 + 1 + 0 = 01\) \(0 + 1 + 1 = 10\) |

\(1 + 0 + 0 = 01\) \(1 + 0 + 1 = 10\) \(1 + 1 + 0 = 10\) \(1 + 1 + 1 = 11\) |

Here, I’ve written each sum using two digits. In a multi-column addition, one of these digits is carried over to the next column. Here, we have a calculation that has three inputs and two outputs. We can make an input/output table for each of the two outputs. The tables are shown in Figure 1.8. We know that these tables can be implemented as combinatorial circuits, so we know that circuits can add binary numbers. To add multi-digit binary numbers, we just need one copy of the basic addition circuit for each column in the sum.

Exercises

- Using only and, or, and not gates, draw circuits that compute the value of each of the propositions A → B, A ↔ B, and A ⊕ B.

- For each of the following propositions, find a combinatorial logic circuit that computes that proposition:

a) \(A∧(B∨¬C)\)

b) \((p∧q)∧¬(p∧¬q)\)

c) \((p∨q∨r)∧(¬p∨¬q∨¬r)\)

d) \(¬(A∧(B∨C))∨(B∧¬A)\) - Find the compound proposition computed by each of the following circuits:

- This section describes a method for finding the compound proposition com- puted by any combinatorial logic circuit. This method fails if you try to apply it to a circuit that contains a feedback loop. What goes wrong? Give an example.

- Show that every compound proposition which is not a contradiction is equiva- lent to a proposition in disjunctive normal form. (Note: We can eliminate the restriction that the compound proposition is not a contradiction by agreeing that “F” counts as a proposition in disjunctive normal form. F is logically equivalent to any contradiction.)

- A proposition in conjunctive normal form (CNF) is a conjunction of dis- junctions of simple terms (with the proviso, as in the definition of DNF that a single item counts as a conjunction or disjunction). Show that every com- pound proposition which is not a tautology is logically equivalent to a com- pound proposition in conjunctive normal form. (Hint: What happens if you take the negation of a DNF proposition and apply DeMorgan’s Laws?)

- A proposition in conjunctive normal form (CNF) is a conjunction of dis- junctions of simple terms (with the proviso, as in the definition of DNF that a single item counts as a conjunction or disjunction). Show that every com- pound proposition which is not a tautology is logically equivalent to a com- pound proposition in conjunctive normal form. (Hint: What happens if you take the negation of a DNF proposition and apply DeMorgan’s Laws?)

- Design circuits to implement the input/output tables for addition, as given in Figure 1.8. Try to make your circuits as simple as possible. (The circuits that are used in real computers for this purpose are more simplified than the ones you will probably come up with, but the general approach of using logic to design computer circuits is valid.)