6.5: Exponential Averages and Recursive Filters

Suppose we try to extend our method for computing finite moving averages to infinite moving averages of the form

\[\begin{align}

x_{n} &= \sum_{k=0}^{\infty} w_{k} u_{n-k} \nonumber\\

&=w_{0} u_{n}+w_{1} u_{n-1}+\cdots+w_{1000} u_{n-1000}+\cdots

\end{align} \nonumber \]

In general, this moving average would require infinite memory for the weighting coefficients \(w_{0}, w_{1}, \ldots\) and for the inputs \(u_{n}, u_{n-1}, \ldots\). Furthermore, the hardware for forming the products \(w_{k} u_{n-k}\) would have to be infinitely fast to compute the infinite moving average in finite time. All of this is clearly fanciful and implausible (not to mention impossible). But what if the weights take the exponential form

\[w_{k}= \begin{cases}0, & k<0 \\ w_{0} a^{k}, & k \geq 0 ?\end{cases} \nonumber \]

Does any simplification result? There is hope because the weighting sequence obeys the recursion

\[w_{k}= \begin{cases}0, & k<0 \\ w_{0}, & k=0 \\ a w_{k-1}, & k \geq 1\end{cases} \nonumber \]
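As a quick sanity check, the recursion can be run directly and compared with the closed form \(w_k = w_0 a^k\). The sketch below is illustrative only; the function name `exponential_weights` and the values \(w_0 = 0.5\), \(a = 0.8\) are hypothetical choices, not part of the text.

```python
import math


def exponential_weights(w0, a, num):
    """Return [w_0, ..., w_{num-1}] generated by the recursion w_k = a * w_{k-1}."""
    weights = []
    w = w0                  # w_0 is supplied directly
    for _ in range(num):
        weights.append(w)   # store the current w_k
        w = a * w           # recursion: w_{k+1} = a * w_k
    return weights


# Compare the recursion with the closed form w_k = w_0 * a**k
# (hypothetical values w_0 = 0.5, a = 0.8).
w = exponential_weights(w0=0.5, a=0.8, num=6)
print(all(math.isclose(wk, 0.5 * 0.8**k) for k, wk in enumerate(w)))  # True
```

The two agree up to floating-point rounding, which is why `math.isclose` is used rather than exact equality.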

This recursion may be rewritten as follows, for \(k \geq 1\):

\[w_{k}-a w_{k-1}=0, k \geq 1 \nonumber \]

Let's now manipulate the infinite moving average and use the recursion for the weights to see what happens. You must follow every step:

\[\begin{align}

x_{n} &=\sum_{k=0}^{\infty} w_{k} u_{n-k} \nonumber \\

&=\sum_{k=1}^{\infty} w_{k} u_{n-k}+w_{0} u_{n} \nonumber\\

&=\sum_{k=1}^{\infty} a w_{k-1} u_{n-k}+w_{0} u_{n} \nonumber\\

&=a \sum_{m=0}^{\infty} w_{m} u_{n-1-m}+w_{0} u_{n} \qquad \text{(substituting } m=k-1\text{)} \nonumber\\

&=a x_{n-1}+w_{0} u_{n} .

\end{align} \nonumber \]
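The derivation can be checked numerically. The sketch below assumes a causal input (\(u_n = 0\) for \(n < 0\)), so the infinite sum reduces to the finite sum \(\sum_{k=0}^{n} w_0 a^k u_{n-k}\) and the recursion starts from \(x_{-1} = 0\). The function names, the random test input, and the values \(w_0 = 0.3\), \(a = 0.7\) are hypothetical.

```python
import math
import random


def direct_sum(u, w0, a, n):
    """x_n = sum_{k>=0} w0 a^k u_{n-k}; terms with n - k < 0 vanish for a causal input."""
    return sum(w0 * a**k * u[n - k] for k in range(n + 1))


def recursive(u, w0, a):
    """x_n = a x_{n-1} + w0 u_n, starting from x_{-1} = 0."""
    x_prev = 0.0
    out = []
    for un in u:
        x_prev = a * x_prev + w0 * un
        out.append(x_prev)
    return out


random.seed(0)
u = [random.uniform(-1.0, 1.0) for _ in range(50)]  # hypothetical test input
w0, a = 0.3, 0.7
x_rec = recursive(u, w0, a)
x_dir = [direct_sum(u, w0, a, n) for n in range(len(u))]
print(all(math.isclose(p, q, rel_tol=1e-9, abs_tol=1e-12) for p, q in zip(x_rec, x_dir)))  # True
```

The direct sum costs \(n+1\) multiplies per output, while the recursion costs two, regardless of \(n\).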

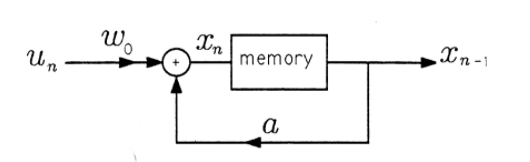

This result is fundamentally important because it says that the output of the infinite exponential moving average may be computed by scaling the previous output \(x_{n-1}\) by the constant \(a\), scaling the new input \(u_n\) by \(w_0\), and adding. Only three memory locations must be allocated: one for \(w_0\), one for \(a\), and one for \(x_{n-1}\). Only two multiplies must be implemented: one for \(ax_{n-1}\) and one for \(w_0u_n\). A diagram of the recursion is given in Figure 1. In this recursion, the old value of the exponential moving average, \(x_{n-1}\), is scaled by \(a\) and added to \(w_0u_n\) to produce the new exponential moving average \(x_n\). This new value is stored in memory, where it becomes \(x_{n-1}\) in the next step of the recursion, and so on.
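The structure in Figure 1 maps naturally onto a small streaming object that stores exactly the three values named above. This is a minimal sketch, not the book's implementation; the class name `ExponentialAverager` and the `x_init` parameter (defaulting to \(x_{-1} = 0\)) are hypothetical.

```python
class ExponentialAverager:
    """Streaming x_n = a*x_{n-1} + w0*u_n, storing only w0, a, and x_{n-1}."""

    def __init__(self, w0, a, x_init=0.0):
        self.w0 = w0          # memory location 1: input gain w_0
        self.a = a            # memory location 2: feedback coefficient a
        self.x_prev = x_init  # memory location 3: previous output x_{n-1}

    def update(self, u_n):
        x_n = self.a * self.x_prev + self.w0 * u_n  # two multiplies, one add
        self.x_prev = x_n  # x_n becomes x_{n-1} for the next step
        return x_n


avg = ExponentialAverager(w0=0.5, a=0.5)
print([avg.update(u) for u in [1.0, 0.0, 0.0]])  # [0.5, 0.25, 0.125]
```

Feeding in a single unit sample reproduces the weights \(w_0, w_0 a, w_0 a^2, \ldots\), as expected from the infinite moving average.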

Try to extend the recursion of the previous paragraphs to the weighted average

\(x_{n}=\sum_{k=0}^{N-1} a^{k} u_{n-k} .\)

What goes wrong?
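One way to explore this exercise numerically is to compute the finite sum directly and compare it with the shortcut \(x_n = a x_{n-1} + u_n\) borrowed from the infinite case (here \(w_0 = 1\)). The sketch assumes \(u_n = 0\) for \(n < 0\); the function names, the unit-step test input, and the values \(a = 0.5\), \(N = 3\) are hypothetical.

```python
def finite_sum(u, a, N, n):
    """x_n = sum_{k=0}^{N-1} a^k u_{n-k}, with u_m = 0 for m < 0."""
    return sum(a**k * u[n - k] for k in range(N) if n - k >= 0)


def naive_recursion(u, a):
    """Attempted shortcut x_n = a x_{n-1} + u_n, copied from the infinite case."""
    x_prev, out = 0.0, []
    for un in u:
        x_prev = a * x_prev + un
        out.append(x_prev)
    return out


u = [1.0] * 8   # hypothetical test input: a step of height 1
a, N = 0.5, 3
naive = naive_recursion(u, a)
exact = [finite_sum(u, a, N, n) for n in range(len(u))]
for n, (p, q) in enumerate(zip(naive, exact)):
    print(n, p, q)
```

For this input the two columns agree for \(n < N\) and differ from \(n = N\) onward; examining the size of that discrepancy is a good starting point for answering the question.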

Compute the output of the exponential moving average \(x_{n}=a x_{n-1}+w_{0} u_{n}\) when the input is

\(u_{n}= \begin{cases}0, & n<0 \\ u, & n \geq 0\end{cases}\)

Plot your result versus \(n\).
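To check a hand-derived answer, the recursion can simply be iterated with the step input. The sketch assumes the filter starts at rest, \(x_{-1} = 0\), which is consistent with the infinite sum when \(u_n = 0\) for \(n < 0\). The function name `step_response` and the values \(w_0 = 0.2\), \(a = 0.8\), \(u = 1\) are hypothetical.

```python
def step_response(w0, a, u, num):
    """Iterate x_n = a x_{n-1} + w0 u_n for n = 0..num-1 with a step input of height u."""
    x_prev, xs = 0.0, []   # x_{-1} = 0: the filter starts at rest
    for _ in range(num):
        x_prev = a * x_prev + w0 * u
        xs.append(x_prev)
    return xs


for n, x in enumerate(step_response(w0=0.2, a=0.8, u=1.0, num=20)):
    print(f"{n:2d}  {x:.4f}")
```

The resulting list can be passed to a plotting routine such as `matplotlib.pyplot.stem` to plot \(x_n\) versus \(n\) and compare against a closed-form expression.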

Compute \(w_0\) in the exponential weighting sequence

\(w_{n}= \begin{cases}0, & n<0 \\ a^{n} w_{0}, & n \geq 0\end{cases}\)

to make the weighting sequence a valid window. (This is a special case of Exercise 3 from Filtering: Moving Averages.) Assume \(-1<a<1\).
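A numerical cross-check for this exercise is sketched below, assuming that a valid window is one whose weights sum to 1. With \(w_0 = 1\) the code approximates \(\sum_{n \geq 0} a^n\) by a long truncated sum; the reciprocal of that sum is the \(w_0\) that would rescale the weights to sum to 1. The function name `truncated_weight_sum`, its `num_terms` parameter, and the sample values of \(a\) are hypothetical.

```python
def truncated_weight_sum(a, w0=1.0, num_terms=10_000):
    """Approximate sum_{n>=0} w0 a^n by its first num_terms terms (assumes |a| < 1)."""
    total, w = 0.0, w0
    for _ in range(num_terms):
        total += w   # add the current weight w0 a^n
        w *= a       # next weight: w0 a^{n+1}
    return total


for a in (0.5, 0.9, -0.5):
    s = truncated_weight_sum(a)    # sum of the weights with w0 = 1
    print(a, s, 1.0 / s)           # 1/s rescales the weights to sum to 1
```

The printed values of \(1/s\) can be compared with the closed-form \(w_0\) obtained by summing the geometric series by hand.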