7.2: The Communication Paradigm

A paradigm is a pattern of ideas that form the foundation for a body of knowledge. A paradigm for (tele-) communication theory is a pattern of basic building blocks that may be applied to the dual problems of (i) reliably transmitting information from source to receiver at high speed or (ii) reliably storing information from source to memory at high density. High-speed communication permits us to accommodate many low-rate sources (such as audio) or one high-rate source (such as video). High-density storage permits us to store a large amount of information in a small space. For example, a typical 1.2 Mbyte floppy disc stores \(9.6 \times 10^6\) bits of information, whereas a typical CD stores about \(2 \times 10^9\) bits, enough for one hour's worth of high-quality sound.

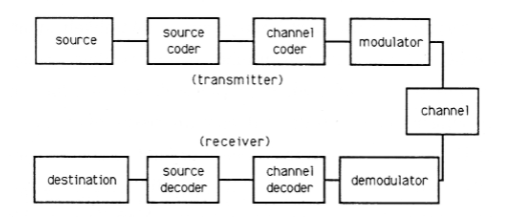

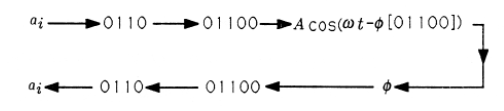

Figure 1 illustrates the basic building blocks that apply to any problem in the theory of (tele-) communication. The source is an arbitrary source of information. It can be the time-varying voltage at the output of a vibration sensor (such as an integrating accelerometer for measuring motion or a microphone for measuring sound pressure); it can be the charges stored in the CCD array of a solid-state camera; it can be the addresses generated from a sequence of keystrokes at a computer terminal; it can be a sequence of instructions in a computer program. The source coder is a device for turning primitive source outputs into more efficient representations. For example, in a recording studio, the source coder would convert analog voltages into digital approximations using an A/D converter; a fancy source coder would use a fancy A/D converter that finely quantized likely analog values and crudely quantized unlikely values. If the source is a source of discrete symbols like letters and numbers, then a fancy source coder would assign short binary sequences to likely symbols (such as \(e\)) and long binary sequences to unlikely symbols (such as \(z\)). The channel coder adds “redundant bits” to the binary output of the source coder so that errors of transmission or storage may be detected and corrected. In the simplest example, a binary string of the form 01001001 would have an extra bit of 1 added to give even parity (an even number of 1's) to the string; the string 10110111 would have an extra bit of 0 added to preserve the even parity. If one bit error is introduced in the channel, then the parity is odd and the receiver knows that an error has occurred. The modulator takes the output of the channel coder, a stream of binary digits, and constructs an analog waveform that represents a block of bits. For example, in a 9600 baud modem, five bits are used to determine one of \(2^5=32\) phases that are used to modulate the signal \(A \cos (\omega t+\phi)\).
Each possible string of five bits has its own personalized phase, \(\phi\), and this phase can be determined at the receiver. The signal \(A \cos (\omega t+\phi)\) is an analog signal that may be transmitted over a channel (such as a telephone line, a microwave link, or a fiber-optic cable). The channel has a finite bandwidth, meaning that it distorts signals, and it is subject to noise or interference from other electromagnetic radiation. Therefore transmitted information arrives at the demodulator in imperfect form. The demodulator uses filters matched to the modulated signals to demodulate the phase and look up the corresponding bit stream. The channel decoder converts the coded bit stream into the information bit stream, and the source decoder looks up the corresponding symbol. This sequence of steps is illustrated symbolically in Figure 7.3.
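The even-parity channel coder and the 32-phase modulator described above can be tried out in a few lines of Python. What follows is a minimal sketch, not a model of any real modem. The function names, the natural binary ordering of the phases, the carrier of 4 cycles per 64-sample block, and the Gaussian noise model are all assumptions made for illustration. Correlation against cosine and sine references stands in for the matched filters mentioned in the text.

```python
import math
import random

BITS_PER_SYMBOL = 5                    # five bits per block, as in the text
NUM_PHASES = 2 ** BITS_PER_SYMBOL      # 2^5 = 32 phases


def add_even_parity(bits: str) -> str:
    """Channel coder: append one bit so the number of 1's is even."""
    return bits + ("1" if bits.count("1") % 2 else "0")


def parity_ok(codeword: str) -> bool:
    """Channel decoder check: odd parity reveals a single-bit error."""
    return codeword.count("1") % 2 == 0


def modulate(block: str, amplitude=1.0, cycles=4, samples=64):
    """Modulator: map a 5-bit block to a phase and sample A*cos(wt + phi).

    Natural binary ordering of the phases is an assumption of this sketch.
    """
    phi = 2 * math.pi * int(block, 2) / NUM_PHASES
    return [amplitude * math.cos(2 * math.pi * cycles * n / samples + phi)
            for n in range(samples)]


def add_noise(waveform, sigma, rng):
    """Channel: add Gaussian noise of standard deviation sigma to each sample."""
    return [x + rng.gauss(0.0, sigma) for x in waveform]


def demodulate(waveform, cycles=4) -> str:
    """Demodulator: estimate the phase by correlation, then look up the bits."""
    samples = len(waveform)
    in_phase = sum(x * math.cos(2 * math.pi * cycles * n / samples)
                   for n, x in enumerate(waveform))
    quadrature = -sum(x * math.sin(2 * math.pi * cycles * n / samples)
                      for n, x in enumerate(waveform))
    phi = math.atan2(quadrature, in_phase) % (2 * math.pi)
    # Round to the nearest of the 32 allowed phases; wrap 32 back to 0.
    index = round(phi * NUM_PHASES / (2 * math.pi)) % NUM_PHASES
    return format(index, f"0{BITS_PER_SYMBOL}b")


if __name__ == "__main__":
    print(add_even_parity("01001001"))   # three 1's, so a 1 is appended
    print(add_even_parity("10110111"))   # six 1's, so a 0 is appended
    rng = random.Random(0)               # fixed seed for repeatability
    noisy = add_noise(modulate("10110"), sigma=0.1, rng=rng)
    print(demodulate(noisy))
```

With 32 phases the spacing between neighbors is only \(2\pi/32\) radians, so the demodulator picks the wrong block as soon as noise moves the estimated phase by more than half that spacing. This is why the channel coder's redundant bits are needed.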

In your subsequent courses on communication theory you will study each block of Figure 1 in detail. You will find that every source of information has a characteristic complexity, called entropy, that determines the minimum rate at which bits must be generated in order to represent the source. You will also find that every communication channel has a characteristic tolerance for bits, called channel capacity. This capacity depends on signal-to-noise ratio and bandwidth. When the channel capacity exceeds the source entropy, then you can transmit information reliably; if it does not, then you cannot.
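To make the comparison between entropy and capacity concrete, here is a small sketch using the standard Shannon definitions, which the text names but does not state: entropy \(H=-\sum_i p_i \log_2 p_i\) bits per symbol, and, for a band-limited channel with additive Gaussian noise, capacity \(C=B \log_2(1+\mathrm{SNR})\) bits per second. The four-symbol source, its prefix code, the symbol rate, and the channel parameters are hypothetical values chosen only for illustration.

```python
import math


def entropy_bits(probabilities):
    """Shannon entropy in bits per symbol: H = -sum p*log2(p)."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def capacity_bps(bandwidth_hz, snr_linear):
    """Capacity of a band-limited Gaussian-noise channel: C = B*log2(1 + SNR)."""
    return bandwidth_hz * math.log2(1 + snr_linear)


def average_code_length(probabilities, codewords):
    """Average number of bits per symbol spent by a given source code."""
    return sum(p * len(c) for p, c in zip(probabilities, codewords))


# Hypothetical source: likely symbols get short codewords, unlikely ones long.
probs = [0.5, 0.25, 0.125, 0.125]
code = ["0", "10", "110", "111"]

H = entropy_bits(probs)                    # bits per symbol
L_avg = average_code_length(probs, code)   # bits per symbol actually used

symbol_rate = 1000.0                       # symbols per second (assumed)
source_rate = H * symbol_rate              # minimum bit rate for this source

bandwidth_hz = 3000.0                      # assumed channel bandwidth
snr_linear = 10 ** (30 / 10)               # assumed 30 dB signal-to-noise ratio
C = capacity_bps(bandwidth_hz, snr_linear)

reliable = C > source_rate                 # the condition stated in the text

if __name__ == "__main__":
    print(f"entropy = {H} bits/symbol, code uses {L_avg} bits/symbol")
    print(f"source rate = {source_rate} bit/s, capacity = {C:.0f} bit/s")
    print("reliable transmission possible:", reliable)
```

For this particular source the code's average length equals the entropy because every probability is a power of one half. In general, the average length of a uniquely decodable code can approach the entropy but not fall below it.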