4.1: Computer Software

Introduction to Software

The second component of an information system is software. Software is the means of taking a user’s data and processing it to perform an intended action. Software translates what users want to do into a set of instructions that tell the hardware what to do. A set of instructions is also called a computer program. For example, when a user presses the ‘A’ key on the keyboard in a word processing app, it is the word processing software that tells the hardware that the user pressed the ‘A’ key and fetches the image of the letter A to display on the screen, confirming to the user that the input was received correctly.

Software is created through the process of programming. We will cover the creation of software in this chapter. In essence, hardware is the machine, and software is the intelligence that tells the hardware what to do. Without software, the hardware would not be functional.

Types of Software

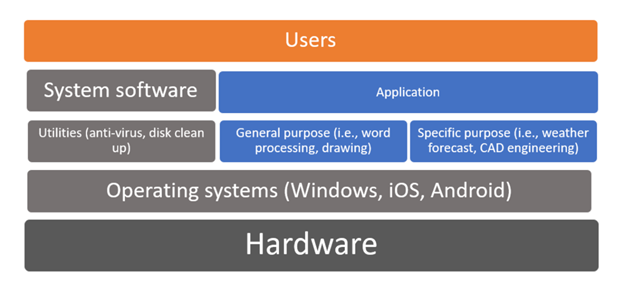

The software component can be broadly divided into two categories: system software and application software.

The system software is a collection of computer programs that provide a software platform for other software programs. It also insulates the hardware's specifics from the applications and users as much as possible by managing the hardware and the networks. It consists of

- Operating System

- Utilities

Application software is a computer program that performs a specific activity for users (e.g., creating a document or drawing a picture). It can be either

- general-purpose (e.g., Microsoft Word, Google Docs) or

- built for a particular purpose (e.g., weather forecasting, CAD engineering)

System Software

Operating Systems

The operating system provides several essential functions, including:

- Managing the hardware resources of the computer

- Providing the user-interface components

- Providing a platform for software developers to write applications.

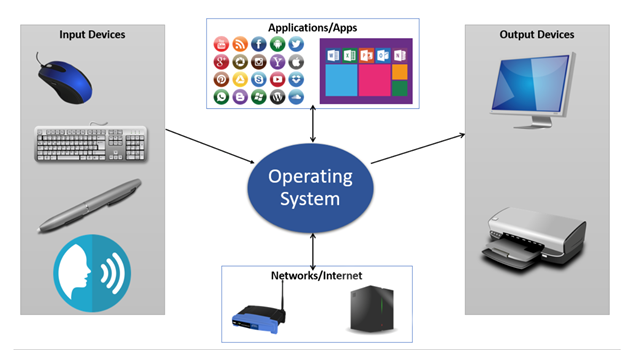

All computing devices run an operating system (OS), a key component of the system software. An OS is a set of programs that coordinates hardware components and other programs and acts as an interface with application software and networks.

Early personal-computer operating systems were simple by today’s standards; they did not provide multitasking and required the user to type commands to initiate an action. The amount of memory that early operating systems could handle was limited as well, making large programs impractical to run. The most popular of the early operating systems was IBM’s Disk Operating System, or DOS, which was actually developed for them by Microsoft.

For personal computers, some of the most popular operating systems today are Microsoft’s Windows, Apple’s macOS, Google’s Chrome OS, and different versions of Linux. Figure \(\PageIndex{2}\) displays how the operating system accepts input from various input devices such as a mouse, a keyboard, a digital pen, or a speech recognition system; sends output to various output devices such as a monitor or a printer; acts as an intermediary between applications and the hardware; and accesses the internet via network devices such as a router or a web server.

Figure \(\PageIndex{2}\) : Operating System Role. Image by Ly-Huong T. Pham is licensed by CC BY NC

In 1984, Apple introduced the Macintosh computer, featuring an operating system with a graphical user interface, now known as macOS. Apple has different names for its OS running on different devices such as iOS, iPadOS, watchOS, and tvOS.

In 1985, as a response to Apple, Microsoft introduced Microsoft Windows, commonly known as Windows, as a graphical user interface for its then command-based operating system, MS-DOS, the Microsoft version of the Disk Operating System it had developed for IBM. By the 1990s, Windows had overtaken Apple’s OS and dominated the desktop personal computer market.

Since 1990, both Apple and Microsoft have released many new versions of their operating systems, with each release adding the ability to process more data at once and access more memory. Features such as multitasking, virtual memory, and voice input have become standard features of both operating systems.

A third personal-computer operating system family that is gaining in popularity is Linux. Linux is a version of the Unix operating system that runs on a personal computer. Unix is an operating system used primarily by scientists and engineers on larger minicomputers. These computers, however, are costly, and software developer Linus Torvalds wanted to find a way to make Unix run on less expensive personal computers: Linux was the result. Linux has many variations and now powers a large percentage of web servers in the world. It is also an example of open-source software, a topic we will cover later in this chapter.

Figure \(\PageIndex{3}\) : Tux, Linux’s Mascot. Image by lewing@isc.tamu.edu Larry Ewing and The GIMP is licensed under Creative Commons CC0 1.0 Universal Public Domain Dedication

Smartphones and tablets run operating systems as well, such as Apple’s iOS, Google’s Android (introduced in 2007), Microsoft’s Windows Mobile, and BlackBerry. Android is based on the Linux kernel and other open-source software and was developed by a consortium of developers. It quickly became the top OS for mobile devices, overtaking Microsoft.

Operating systems continue to improve, with each generation adding features that increase speed and performance, process more data at once, and access more memory.

All computing devices run an operating system, as shown in the table below. For personal computers, the most popular operating systems are Microsoft’s Windows, Apple’s macOS, and different versions of Linux. Smartphones and tablets run operating systems as well, such as Apple’s iOS and Google’s Android and Chrome OS.

| Operating Systems | Desktop | Mobile |
|---|---|---|
| Microsoft Windows | Windows 10 | Windows 10 |
| Apple OS | Mac OS | iOS |
| Various versions of Linux | Ubuntu | Android (Google) |

According to netmarketshare.com (2020), from August 2019 to August 2020, Windows retained its dominant position on the desktop with over 87% market share. In the mobile market, however, it trails far behind Android, which holds over 70% market share, followed by Apple’s iOS with over 28%.

Sidebar: Why Is Microsoft Software So Dominant in the Business World?

Almost all businesses used IBM mainframe computers back in the 1960s and 1970s. These same businesses shied away from personal computers until IBM released the PC in 1981. Because IBM was dominant, buying an IBM PC was seen as a safe, low-risk decision, and the IBM PC ran the DOS operating system that Microsoft had developed. Another reason might be that once a business selects an operating system as its standard, it invests in additional software, hardware, and services built for that OS. Switching to another OS then becomes a hurdle, both financially and in retraining the workforce.

Utility

Utility software is special-purpose software focused on keeping the infrastructure healthy. Examples include antivirus software, which scans for and stops computer viruses, and disk defragmentation software, which optimizes how files are stored. Over time, some popular utilities have been absorbed as features of the operating system.

Application or App Software

The second major category of software is application software. While system software focuses on running the computer, application software allows the end user to accomplish some goal or purpose. Examples include word processors, photo editors, spreadsheets, and browsers. Application software is grouped into many categories, including:

- Killer app

- Productivity

- Enterprise

- Mobile

The “Killer” App

When a new type of digital device is invented, there is generally a small group of technology enthusiasts who will purchase it just for the joy of figuring out how it works. A “killer” application runs on only one OS platform and becomes so essential that many people will buy a device on that OS platform just to run that application. For the personal computer, the killer application was the spreadsheet. In 1979, VisiCalc, the first personal-computer spreadsheet package, was introduced. It was an immediate hit and drove sales of the Apple II. It also solidified the value of the personal computer beyond the relatively small circle of technology geeks. When the IBM PC was released, another spreadsheet program, Lotus 1-2-3, was the killer app for business users. Today, Microsoft Excel dominates as the spreadsheet program, running on all the popular operating systems.

Productivity Software

Along with the spreadsheet, several other software applications have become standard tools for the workplace. These applications, called productivity software, allow office employees to complete their daily work. Many times, these applications come packaged together, such as in Microsoft’s Office suite. Here is a list of these applications and their basic functions:

- Word processing: This class of software provides for the creation of written documents. Functions include the ability to type and edit text, format fonts and paragraphs, and add, move, and delete text throughout the document. Most modern word-processing programs can also add tables, images, voice, videos, and various layout and formatting features to the document. Word processors save their documents as electronic files in a variety of formats. The most popular word-processing package is Microsoft Word, which saves its files in the DOCX format. This format can be read and written by many other word-processor packages or converted to other formats such as Adobe’s PDF.

- Spreadsheet: This class of software provides a way to do numeric calculations and analysis. The working area is divided into rows and columns, where users can enter numbers, text, or formulas. The formulas make a spreadsheet powerful, allowing the user to develop complex calculations that can change based on the numbers entered. Most spreadsheets also include the ability to create charts based on the data entered. The most popular spreadsheet package is Microsoft Excel, which saves its files in the XLSX format. Just as with word processors, many other spreadsheet packages can read and write to this file format.

- Presentation: This software class provides for the creation of slideshow presentations that can be shared, printed, or projected on a screen. Users can add text, images, audio, video, and other media elements to the slides. Microsoft’s PowerPoint remains the most popular software, saving its files in PPTX format.

- Office Suite: Microsoft popularized the idea of the office-software productivity bundle with their release of Microsoft Office. Some office suites include other types of software. For example, Microsoft Office includes Outlook, its e-mail package, and OneNote, an information-gathering collaboration tool. The professional version of Office also includes Microsoft Access, a database package. (Databases are covered more in chapter 4.) This package continues to dominate the market, and most businesses expect employees to know how to use this software. However, many competitors to Microsoft Office exist and are compatible with Microsoft's file formats (see table below). Microsoft now has a cloud-based version called Microsoft Office 365. Similar to Google Drive, this suite allows users to edit and share documents online utilizing cloud-computing technology.

| Suite | Word Processing | Spreadsheet | Presentation | Other |
|---|---|---|---|---|
| Microsoft Office | Word | Excel | PowerPoint | Outlook (email), Access (database), OneNote (information gathering), and many others |
| Apple iWork | Pages | Numbers | Keynote | Integrates with iTunes, iCloud, and other Apple software |
| OpenOffice | Writer | Calc | Impress | Base (database), Draw (drawing), Math (equations) |
| Google Drive | Document | Spreadsheet | Presentation | Gmail (email), Forms (online form data collection), Draw (drawing), and many others |

Sidebar: “PowerPointed” to Death

As presentation software, specifically Microsoft PowerPoint, has gained acceptance as the primary method to formally present information in a business setting, the art of giving an engaging presentation is becoming rare. Many presenters now just read the bullet points in the presentation and immediately bore those in attendance who can already read it for themselves.

The real problem is not with PowerPoint as much as it is with the person creating and presenting. The book Presentation Zen by Garr Reynolds is highly recommended to anyone who wants to improve their presentation skills.

New opportunities have been presented to make presentation software more effective. One such example is Prezi. Prezi is a presentation tool that uses a single canvas for the presentation, allowing presenters to place text, images, and other media on the canvas and then navigate between these objects as they present.

Enterprise Software

As the personal computer proliferated inside organizations, control over the information generated by the organization began splintering. For example, the customer service department creates a customer database to track calls and problem reports. The sales department also creates a database to keep track of customer information. Which one should be used as the master list of customers? As another example, someone in sales might create a spreadsheet to calculate sales revenue, while someone in finance creates a different one that meets their department's needs. However, the two spreadsheets will likely come up with different totals for revenue. Which one is correct? And who is managing all this information? Inconsistencies like these make it hard for management to make effective decisions.

Enterprise Resource Planning

In the 1990s, the need to bring the organization’s information back under centralized control became more apparent. The enterprise resource planning (ERP) system (sometimes just called enterprise software) was developed to bring together an entire organization in one software application. Key characteristics of an ERP include:

- An integrated set of modules: Each module serves a different function in the organization, such as marketing, sales, or manufacturing.

- A consistent user interface: An ERP provides a common interface across all of its modules, which the organization’s employees use to access information.

- A common database: All users of the ERP edit and save their information in the same data source. This means that there is only one customer database, only one calculation for revenue, and so on.

- Integrated business processes: All users must follow the same business rules and processes throughout the entire organization. ERP systems include functionality that covers all of the essential components of a business, such as how the organization tracks cash, invoices, purchases, payroll, product development, and the supply chain.
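The "common database" idea can be illustrated with a minimal Python sketch. It is only an illustration under stated assumptions, not how any particular ERP product works: the `sales` table, the customer names, the amounts, and the `total_revenue` function are all hypothetical. The point is that when every department reads the same data source through the same calculation, there is only one answer for revenue.

```python
import sqlite3

# One shared, in-memory database stands in for the ERP's common data source.
# Table name, customers, and amounts are made up for illustration.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE sales (customer TEXT, amount REAL)")
db.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("Acme", 1200.0), ("Globex", 800.0)],
)

def total_revenue(connection):
    """The single revenue calculation that every department uses."""
    (total,) = connection.execute("SELECT SUM(amount) FROM sales").fetchone()
    return total

# The sales and finance departments query the same source the same way,
# so their totals cannot drift apart as two separate spreadsheets might.
sales_view = total_revenue(db)
finance_view = total_revenue(db)
print(sales_view, finance_view)
```

Contrast this with the two-spreadsheet situation described earlier, where each department keeps its own copy of the data and its own formula.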

ERP systems were originally marketed to large corporations, given that they are costly. However, as more and more large companies began installing them, ERP vendors began targeting mid-sized and even smaller businesses. Some of the more well-known ERP systems include those from SAP, Oracle, and Microsoft.

To effectively implement an ERP system in an organization, the organization must be ready to make a full commitment, including the cost to train employees as part of the implementation.

All aspects of the organization are affected as old systems are replaced by the ERP system. In general, implementing an ERP system can take two to three years and several million dollars.

So why implement an ERP system? If done properly, an ERP system can bring an organization a good return on its investment. By consolidating information systems across the enterprise and using the software to enforce best practices, most organizations see an overall improvement after implementing an ERP. Business processes as a form of competitive advantage will be covered in chapter 9.

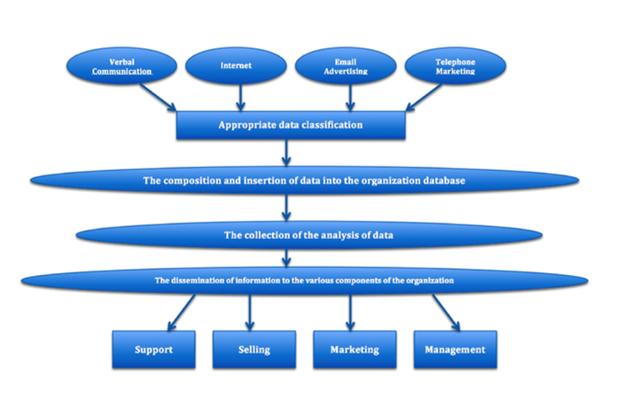

Customer Relationship Management

A customer relationship management (CRM) system is a software application designed to manage customer interactions, including customer service, marketing, and sales. It collects all data about the customers. The objectives of a CRM are:

- Personalize customer relationship to increase customer loyalty

- Improve communication

- Anticipate needs to retain existing or acquire new customers

Some ERP software systems include CRM modules. An example of a well-known CRM package is Salesforce.

Supply Chain Management

Many organizations must deal with the complex task of managing their supply chains. At its simplest, a supply chain is a linkage between an organization’s suppliers, its manufacturing facilities, and its products' distributors. Each link in the chain has a multiplying effect on the complexity of the process. For example, if there are two suppliers, one manufacturing facility, and two distributors, then there are 2 x 1 x 2 = 4 links to handle. However, if you add two more suppliers, another manufacturing facility, and two more distributors, then you have 4 x 2 x 4 = 32 links to manage.
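The multiplying effect described above can be checked with a short Python sketch. The function name `count_links` is an assumption made for this illustration; it simply multiplies the number of suppliers, manufacturing facilities, and distributors, as the text does.

```python
from math import prod

def count_links(suppliers, facilities, distributors):
    """Count the supplier -> facility -> distributor combinations to manage."""
    return prod([suppliers, facilities, distributors])

# The two scenarios from the text.
small_chain = count_links(2, 1, 2)
large_chain = count_links(4, 2, 4)
print(small_chain)  # 4
print(large_chain)  # 32
```

Doubling each stage of the chain multiplies the number of links by eight, which is why complexity grows so quickly.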

A supply chain management (SCM) system manages the interconnection between these links and the inventory of products in their various stages of development. The Association for Operations Management provides a full definition of supply chain management: “The design, planning, execution, control, and monitoring of supply chain activities to create net value, building a competitive infrastructure, leveraging worldwide logistics, synchronizing supply with demand, and measuring performance globally.”² Most ERP systems include a supply chain management module.

Mobile Software

A mobile application, commonly called a mobile app, is a software application programmed to run specifically on a mobile device such as a smartphone or tablet.

Smartphones and tablets are becoming a dominant form of computing, with many more smartphones being sold than personal computers. This means that organizations will have to get smart about developing software for mobile devices to stay relevant. As adoption of mobile devices has risen, the number of apps has exploded into the millions (Forbes.com, 2020), and there is an app for just about anything a user is looking to do. Examples include apps that serve as a flashlight, a step counter, or a plant identifier, as well as games.

Software Creation

We have just discussed different types of software and can now ask: How is software created? If software is the set of instructions that tells the hardware what to do, how are these instructions written? If a computer reads everything as ones and zeros, do we have to learn to write software that way? Thankfully, no. Programming languages were created so that software developers can write system software and applications in a more readable form. The people who write programs are called computer programmers or software developers.

Analogous to a human language, a programming language consists of keywords, comments, symbols, and grammatical rules used to construct statements: valid instructions that the computer can understand and carry out. Using this language, a programmer writes a program, called the source code. Another piece of software then processes the source code, converting the programming statements into machine-readable form: the ones and zeros that the CPU can execute. This conversion process is often known as compiling, and the software that performs it is called a compiler. Most of the time, programming is done inside a programming environment. For example, Microsoft’s Visual Studio provides developers with an editor to write source code, a compiler, and help for many of Microsoft’s programming languages. Examples of well-known programming languages today include Java, PHP, and the various flavors of C (Visual C, C++, C#).

Convert a computer program to an executable. Image by Ly-Huong T. Pham is licensed under CC-BY-NC
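To make the source-code-to-instructions step more concrete, here is a small Python sketch. It is an analogy rather than an exact match for the process described above: Python compiles source code into bytecode for its own virtual machine, not directly into the machine code a CPU runs, as a C compiler would. The variable names (`price`, `quantity`, `total`) are made up for this example.

```python
import dis

# Human-readable source code, held as plain text.
source_code = "total = price * quantity"

# compile() converts the source text into a code object containing bytecode.
code_object = compile(source_code, "<example>", "exec")

# dis.dis() prints the low-level instructions produced by the compiler.
dis.dis(code_object)

# Running the compiled code with some sample values.
namespace = {"price": 3, "quantity": 4}
exec(code_object, namespace)
print(namespace["total"])  # 12
```

The listing printed by `dis.dis` varies between Python versions, but in each case a single readable statement becomes several lower-level instructions.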

Thousands of programming languages have been created since Ada Lovelace published what is often regarded as the first computer program in 1843. COBOL, one of the earlier English-like languages, dates from the late 1950s and is still used today in services such as payroll and reservation systems. The C programming language was introduced in the 1970s and remains a popular choice. Some newer languages, such as C# and Swift, are gaining momentum as well. Programmers select the language best matched to the problem to be solved and the target OS platform. For example, languages such as HTML and JavaScript are used to develop web pages.

It is hard to determine which language is the most popular since it varies. However, according to TIOBE Index, one of the companies that rank the popularity of the programming languages monthly, the top five in August 2020 are C, Java, Python, C++, and C# (2020). For more information on this methodology, please visit the TIOBE definition page. For those who wish to learn more about programming, Python is a good first language to learn because not only is it a modern language for web development, it is simple to learn and covers many fundamental concepts of programming that apply to other languages.

Some programs can be written by one person. However, most software is written by many developers working together. For example, it takes hundreds of software engineers to write Microsoft Windows or Excel. To ensure that teams deliver quality software on time and with the fewest errors, also known as bugs, formal project management methodologies are used.

Open-Source vs. Closed-Source Software

When the personal computer was first released, computer enthusiasts immediately banded together to build applications and solve problems. These computer enthusiasts were happy to share any programs they built and solutions to problems they found; this collaboration enabled them to innovate more quickly and fix problems.

As software began to become a business, however, this idea of sharing everything fell out of favor for some. When a software program takes hundreds of hours to develop, it is understandable that the programmers do not want to give it away. This led to a new business model of restrictive software licensing, which required payment to the owner for the software, a model that is still dominant today. This model is sometimes referred to as closed source, as the source code remains private property and is not made available to others. Microsoft Windows, Excel, and Apple iOS are examples of closed-source software.

There are many, however, who feel that software should not be restricted. Like those early hobbyists in the 1970s, they feel that innovation and progress can be made much more rapidly if we share what we learn. In the 1990s, with Internet access connecting more and more people, the open-source movement gained steam.

Open-source software is software that has the source code available for anyone to copy and use. For non-programmers, it won’t be of much use unless the compiled format is also made available for users to use. However, for programmers, the open-source movement has led to developing some of the world's most-used software, including the Firefox browser, the Linux operating system, and the Apache webserver.

Just about every type of commercial product has an open source equivalent. SourceForge.net lists over two hundred and thirty thousand such products!¹ Many of these products come with the installation tools, support utilities, and full documentation that make them difficult to distinguish from traditional commercial efforts (Woods, 2008). In addition to the LAMP products, some major examples include the following:

- Firefox—a Web browser that competes with Internet Explorer

- OpenOffice—a competitor to Microsoft Office

- Gimp—a graphic tool with features found in Photoshop

- Alfresco—collaboration software that competes with Microsoft Sharepoint and EMC’s Documentum

- Marketcetera—an enterprise trading platform for hedge fund managers that competes with FlexTrade and Portware

- Zimbra—open source e-mail software that competes with Outlook server

- MySQL, Ingres, and EnterpriseDB—open source database software packages that each go head-to-head with commercial products from Oracle, Microsoft, Sybase, and IBM

- SugarCRM—customer relationship management software that competes with Salesforce.com and Siebel

- Asterisk—an open source implementation for running a PBX corporate telephony system that competes with offerings from Nortel and Cisco, among others

- FreeBSD and Sun’s OpenSolaris—open source versions of the Unix operating system

Many businesses are wary of open-source software precisely because the code is available for anyone to see; they feel that this increases the risk of an attack. Others counter that this openness decreases the risk: because the source code is freely available, thousands of programmers can review it, contribute features and fixes, and patch vulnerabilities faster than is typical for closed-source software.

In summary, some benefits of the open-source model are:

- The software is available for free: Free alternatives to costly commercial code can be a tremendous motivator, particularly since conventional software often requires customers to pay for every copy used and to pay more for software that runs on increasingly powerful hardware. Big Lots stores lowered costs by as much as $10 million by finding viable OSS (Castelluccio, 2008) to serve their system needs. Online broker E*TRADE estimates that its switch to open source helped save over $13 million a year (King, 2008). And Amazon claimed in SEC filings that the switch to open source was a key contributor to nearly $20 million in tech savings (Shankland, et. al., 2001). Firms like TiVo, which use OSS in their own products, eliminate a cost spent either developing their own operating system or licensing similar software from a vendor like Microsoft.

- Reliability: There’s a saying in the open source community, “Given enough eyeballs, all bugs are shallow” (Raymond, 1999). What this means is that the more people who look at a program’s code, the greater the likelihood that an error will be caught and corrected. The open source community harnesses the power of legions of geeks who are constantly trawling OSS products, looking to squash bugs and improve product quality. And studies have shown that the quality of popular OSS products outperforms proprietary commercial competitors (Ljungberg, 2000). In one study, Carnegie Mellon University’s Cylab estimated the quality of Linux code to be less buggy than commercial alternatives by a factor of two hundred (Castelluccio, 2008)!

- Security: OSS advocates also argue that by allowing “many eyes” to examine the code, the security vulnerabilities of open source products come to light more quickly and can be addressed with greater speed and reliability (Wheeler, 2003). High profile hacking contests have frequently demonstrated the strength of OSS products. In one well-publicized 2008 event, laptops running Windows and Macintosh were both hacked (the latter in just two minutes), while a laptop running Linux remained uncompromised (McMillan, 2008). Government agencies and the military often appreciate the opportunity to scrutinize open source efforts to verify system integrity (a particularly sensitive issue among foreign governments leery of legislation like the USA PATRIOT Act of 2001) (Lohr, 2003). Many OSS vendors offer security focused (sometimes called hardened) versions of their products. These can include systems that monitor the integrity of an OSS distribution, checking file size and other indicators to be sure that code has not been modified and redistributed by bad guys who’ve added a back door, malicious routines, or other vulnerabilities.

- Scalability: Many major OSS efforts can run on everything from cheap commodity hardware to high-end supercomputing. Scalability allows a firm to scale from start-up to blue chip without having to significantly rewrite their code, potentially saving big on software development costs. Not only can many forms of OSS be migrated to more powerful hardware, packages like Linux have also been optimized to balance a server’s workload among a large number of machines working in tandem. Brokerage firm E*TRADE claims that usage spikes following 2008 U.S. Federal Reserve moves flooded the firm’s systems, creating the highest utilization levels in five years. But E*TRADE credits its scalable open source systems for maintaining performance while competitors’ systems struggled (King, 2008).

- Agility and Time to Market: Vendors who use OSS as part of product offerings may be able to skip whole segments of the software development process, allowing new products to reach the market faster than if the entire software system had to be developed from scratch, in-house. Motorola has claimed that customizing products built on OSS has helped speed time-to-market for the firm’s mobile phones, while the team behind the Zimbra e-mail and calendar effort built their first product in just a few months by using some forty blocks of free code (Guth, 2006).

- The software source code is available: It can be examined and reviewed before it is installed.

- Quick Updates: The large community of programmers who work on open-source projects leads to quick bug-fixing and feature additions.
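The integrity monitoring mentioned in the Security point above (checking file size and other indicators to detect tampering) could be sketched roughly as follows. This is a minimal illustration, not how any particular hardened distribution works: the function names, the file name, and the choice of a SHA-256 digest as the "other indicator" are all assumptions made for the example.

```python
import hashlib
import os
import tempfile


def fingerprint(path):
    """Return (size_in_bytes, sha256_hex_digest) for the file at path."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read in chunks so large files do not need to fit in memory.
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return os.path.getsize(path), digest.hexdigest()


def is_unmodified(path, expected):
    """True if the file still matches the recorded (size, digest) pair."""
    return fingerprint(path) == expected


def demo():
    """Record a fingerprint, tamper with the file, and check again."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "release.bin")  # hypothetical file name
        with open(path, "wb") as f:
            f.write(b"trusted release contents")
        recorded = fingerprint(path)
        before = is_unmodified(path, recorded)
        # Simulate someone appending malicious code to the file.
        with open(path, "ab") as f:
            f.write(b" plus a back door")
        after = is_unmodified(path, recorded)
    return before, after
```

Calling `demo()` checks the file once before and once after the simulated tampering. A size check alone would miss a change that keeps the length the same, which is why the sketch pairs it with a cryptographic digest.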

Some benefits of the closed-source model are:

- Providing a financial incentive for software developers or companies

- Technical support from the company that developed the software.

Today there are thousands of open-source software applications available for download. An example of open-source productivity software is Open Office Suite. One good place to search for open-source software is sourceforge.net, where thousands of software applications are available for free download.

Why Give It Away? The Business of Open Source

Open source is a sixty-billion-dollar industry (Asay, 2008), but it has a disproportionate impact on the trillion-dollar IT market. By lowering the cost of computing, open source efforts make more computing options accessible to smaller firms. More reliable, secure computing also lowers costs for all users. OSS also diverts funds that firms would otherwise spend on fixed costs, like operating systems and databases, so that these funds can be spent on innovation or other more competitive initiatives. Think about Google, a firm that some estimate has over 1.4 million servers. Imagine the costs if it had to license software for each of those boxes!

Commercial interest in OSS has sparked an acquisition binge. Red Hat bought open source application server firm JBoss for $350 million. Novell snapped up SUSE Linux for $210 million. And Sun plunked down over $1 billion for open source database provider MySQL (Greenberg, 2008). And with Oracle’s acquisition of Sun, one of the world’s largest commercial software firms has zeroed in on one of the deepest portfolios of open source products.

But how do vendors make money on open source? One way is by selling support and consulting services. While not exactly Microsoft money, Red Hat, the largest purely OSS firm, reported half a billion dollars in revenue in 2008. The firm had two and a half million paid subscriptions offering access to software updates and support services (Greenberg, 2008). Oracle, a firm that sells commercial ERP and database products, provides Linux for free, selling high-margin Linux support contracts for as much as five hundred thousand dollars (Fortt, 2007). The added benefit for Oracle? Weaning customers away from Microsoft—a firm that sells many products that compete head-to-head with Oracle’s offerings. Service also represents the most important part of IBM’s business. The firm now makes more from services than from selling hardware and software (Robertson, 2009). And every dollar saved on buying someone else’s software product means more money IBM customers can spend on IBM computers and services. Sun Microsystems was a leader in OSS, even before the Oracle acquisition bid. The firm has used OSS to drive advanced hardware sales, but the firm also sells proprietary products that augment its open source efforts. These products include special optimization, configuration management, and performance tools that can tweak OSS code to work its best (Preimesberger, 2008).

Here’s where we also can relate the industry’s evolution to what we’ve learned about standards competition in our earlier chapters. In the pre-Linux days, nearly every major hardware manufacturer made its own, incompatible version of the Unix operating system. These fractured, incompatible markets were each so small that they had difficulty attracting third-party vendors to write application software. Now, much to Microsoft’s dismay, all major hardware firms run Linux. That means there’s a large, unified market that attracts software developers who might otherwise write for Windows.

To keep standards unified, several Linux-supporting hardware and software firms also back the Linux Foundation, the nonprofit effort where Linus Torvalds serves as a fellow, helping to oversee Linux’s evolution. Sharing development expenses in OSS has been likened to going in on a pizza together. Everyone wants a pizza with the same ingredients. The pizza doesn’t make you smarter or better. So why not share the cost of a bigger pie instead of buying by the slice (Cohen, 2008)? With OSS, hardware firms spend less money than they would in the brutal, head-to-head competition where each once offered a “me too” operating system that was incompatible with rivals but offered little differentiation. Hardware firms now find their technical talent can be deployed in other value-added services mentioned above: developing commercial software add-ons, offering consulting services, and enhancing hardware offerings.

Linux on the Desktop?

While Linux is a major player in enterprise software, mobile phones, and consumer electronics, the Linux OS can only be found on a tiny fraction of desktop computers. There are several reasons for this. Some suggest Linux simply isn’t as easy to install and use as Windows or the Mac OS. This complexity can raise the total cost of ownership (TCO) of Linux desktops, with additional end-user support offsetting any gains from free software. The small number of desktop users also dissuades third-party firms from porting popular desktop applications over to Linux. For consumers in most industrialized nations, the added complexity and limited desktop application availability of desktop Linux just isn’t worth the one to two hundred dollars saved by giving up Windows.

But in developing nations where incomes are lower, the cost of Windows can be daunting. Consider the OLPC, Nicholas Negroponte’s “one-hundred-dollar” laptop. An additional one hundred dollars for Windows would double the target cost for the nonprofit’s machines. It is not surprising that the first OLPC laptops ran Linux. Microsoft recognizes that if a whole generation of first-time computer users grows up without Windows, they may favor open source alternatives years later when starting their own businesses. As a result, Microsoft has begun offering low-cost versions of Windows (in some cases for as little as seven dollars) in nations where populations have much lower incomes. Microsoft has even offered a version of Windows to the backers of the OLPC. While Microsoft won’t make much money on these efforts, the low cost versions will serve to entrench Microsoft products as standards in emerging markets, staving off open source rivals and positioning the firm to raise prices years later when income levels rise.

MySQL: Turning a Ten-Billion-Dollars-a-Year Business into a One-Billion-Dollar One

Finland is not the only Scandinavian country to spawn an open source powerhouse. Uppsala Sweden’s MySQL (pronounced “my sequel”) is the “M” in the LAMP stack, and is used by organizations as diverse as FedEx, Lufthansa, NASA, Sony, UPS, and YouTube.

The “SQL” in the name stands for the structured query language, a standard method for organizing and accessing data. SQL is also employed by commercial database products from Oracle, Microsoft, and Sybase. Even Linux-loving IBM uses SQL in its own lucrative DB2 commercial database product. Since all of these databases are based on the same standard, switching costs are lower, so migrating from a commercial product to MySQL’s open source alternative is relatively easy. And that spells trouble for commercial firms. Granted, the commercial efforts offer some bells and whistles that MySQL doesn’t yet have, but those extras aren’t necessary in a lot of standard database use. Some organizations, impressed with MySQL’s capabilities, are mandating its use on all new development efforts, attempting to cordon off proprietary products in legacy code that is maintained but not expanded.
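To make the idea of a shared query standard concrete, here is a small sketch of SQL statements for creating a table, inserting rows, and summarizing them. It uses SQLite through Python's built-in `sqlite3` module purely as a stand-in, since it needs no server; the table and column names are invented for illustration. The `CREATE TABLE`, `INSERT`, and `SELECT ... GROUP BY` statements are the kind of core SQL that MySQL and the commercial products also accept, although each product adds its own extensions and the `?` placeholder style is specific to this driver.

```python
import sqlite3


def run_query():
    """Create a tiny table in memory and summarize it with standard SQL."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE shipments ("
        "id INTEGER PRIMARY KEY, carrier TEXT, weight_kg REAL)"
    )
    # Hypothetical sample rows.
    conn.executemany(
        "INSERT INTO shipments (carrier, weight_kg) VALUES (?, ?)",
        [("A", 2.5), ("B", 10.0), ("A", 4.0)],
    )
    rows = conn.execute(
        "SELECT carrier, COUNT(*), SUM(weight_kg) "
        "FROM shipments GROUP BY carrier ORDER BY carrier"
    ).fetchall()
    conn.close()
    return rows
```

`run_query()` returns one row per carrier with its shipment count and total weight. Because the query text itself is ordinary SQL, moving it to a different database mostly means changing the connection code rather than the queries.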

Savings from using MySQL can be huge. The Web site PriceGrabber pays less than ten thousand dollars in support for MySQL compared to one hundred thousand to two hundred thousand dollars for a comparable Oracle effort. Lycos Europe switched from Oracle to MySQL and slashed costs from one hundred twenty thousand dollars a year to seven thousand dollars. And the travel reservation firm Sabre used open source products such as MySQL to slash ticket purchase processing costs by 80 percent (Lyons, 2004).
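The figures above can be turned into percentage savings with a line of arithmetic. The sketch below uses only the dollar amounts quoted in the paragraph; the function name is an illustrative choice, and because PriceGrabber is reported as paying "less than" ten thousand dollars, its results are lower bounds.

```python
def percent_saved(old_cost, new_cost):
    """Percentage reduction when annual cost drops from old_cost to new_cost."""
    return (old_cost - new_cost) * 100 / old_cost


# Lycos Europe: $120,000 a year down to $7,000 a year.
lycos = percent_saved(120_000, 7_000)

# PriceGrabber: under $10,000 versus a $100,000 to $200,000 Oracle effort.
pricegrabber_low = percent_saved(100_000, 10_000)
pricegrabber_high = percent_saved(200_000, 10_000)
```

On these numbers Lycos Europe cut its costs by roughly 94 percent, and PriceGrabber's support bill is at least 90 to 95 percent lower than the comparable Oracle range.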

MySQL does make money, just not as much as its commercial rivals. While you can download a version of MySQL over the Net, the flagship product also sells for four hundred ninety-five dollars per server computer compared to a list price for Oracle that can climb as high as one hundred sixty thousand dollars. Of the roughly eleven million copies of MySQL in use, the company only gets paid for about one in a thousand (Ricadela, 2007). Firms pay for what’s free for one of two reasons: (1) for MySQL service, and (2) for the right to incorporate MySQL’s code into their own products (Kirkpatrick, 2004). Amazon, Facebook, Gap, NBC, and Sabre pay MySQL for support; Cisco, Ericsson, HP, and Symantec pay for the rights to the code (Ricadela, 2007). Top-level round-the-clock support for MySQL for up to fifty servers is fifty thousand dollars a year, still a fraction of the cost for commercial alternatives. Founder Marten Mickos has stated an explicit goal of the firm is “turning the $10-billion-a-year database business into a $1 billion one” (Kirkpatrick, 2004).

When Sun Microsystems spent over $1 billion to buy Mickos’ MySQL in 2008, Sun CEO Jonathan Schwartz called the purchase the “most important acquisition in the company’s history” (Shankland, 2008). Sun hoped the cheap database software could make the firm’s hardware offerings seem more attractive. And it looked like Sun was good for MySQL, with the product’s revenues growing 55 percent in the year after the acquisition (Asay, 2009).

But here’s where it gets complicated. Sun also had a lucrative business selling hardware to support commercial ERP and database software from Oracle. That put Sun and partner Oracle in a relationship where they were both competitors and collaborators (the “coopetition” or “frenemies” phenomenon mentioned in Chapter 6 “Understanding Network Effects”). Then in spring 2009, Oracle announced it was buying Sun. Oracle CEO Larry Ellison mentioned acquiring the Java language was the crown jewel of the purchase, but industry watchers have raised several questions. Will the firm continue to nurture MySQL and other open source products, even as this software poses a threat to its bread-and-butter database products? Will the development community continue to back MySQL as the de facto standard for open source SQL databases, or will they migrate to an alternative? Or will Oracle find the right mix of free and fee-based products and services that allow MySQL to thrive while Oracle continues to grow? The implications are serious for investors, as well as firms that have made commitments to Sun, Oracle, and MySQL products. The complexity of this environment further demonstrates why technologists need business savvy and market monitoring skills and why business folks need to understand the implications of technology and tech-industry developments.

Legal Risks and Open Source Software: A Hidden and Complex Challenge

Open source software isn’t without its risks. Competing reports cite certain open source products as being difficult to install and maintain (suggesting potentially higher total cost of ownership, or TCO). Adopters of OSS without support contracts may lament having to rely on an uncertain community of volunteers to support their problems and provide innovative upgrades. Another major concern is legal exposure. Firms adopting OSS may be at risk if they distribute code and aren’t aware of the licensing implications. Some commercial software firms have pressed legal action against the users of open source products when there is a perceived violation of software patents or other unauthorized use of their proprietary code.

For example, in 2007 Microsoft suggested that Linux and other open source software efforts violated some two hundred thirty-five of its patents (Ricadela, 2007). The firm then began collecting payments and gaining access to the patent portfolios of companies that use the open source Linux operating system in their products, including Fuji, Samsung, and Xerox. Microsoft also cut a deal with Linux vendor Novell in which both firms pledged not to sue each other’s customers for potential patent infringements.

Also complicating issues are the varying open source license agreements (these go by various names, such as GPL and the Apache License), each with slightly different legal provisions—many of which have evolved over time. Keeping legal with so many licensing standards can be a challenge, especially for firms that want to bundle open source code into their own products (Lacy, 2006). An entire industry has sprouted up to help firms navigate the minefield of open source legal licenses. Chief among these are products, such as those offered by the firm Black Duck, which analyze the composition of software source code and report on any areas of concern so that firms can honor any legal obligations associated with their offerings. Keeping legal requires effort and attention, even in an environment where products are allegedly “free.” This also shows that even corporate lawyers had best geek-up if they want to prove they’re capable of navigating a twenty-first-century legal environment.
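As a rough illustration of what "analyzing the composition of source code" can mean, the sketch below looks for SPDX license tags (a widely used convention for marking a file's license in a comment) and flags files that carry no tag or a license on a review list. This is a simplified assumption for teaching purposes, not a description of how Black Duck's products work, and the review list is a hypothetical policy rather than legal advice.

```python
import re

# Matches comment tags such as "SPDX-License-Identifier: Apache-2.0".
SPDX_TAG = re.compile(r"SPDX-License-Identifier:\s*([A-Za-z0-9.+-]+)")

# Hypothetical policy: licenses a firm wants its lawyers to look at
# before bundling the code into a product.
NEEDS_REVIEW = {"GPL-2.0-only", "GPL-3.0-only", "AGPL-3.0-only"}


def scan_sources(sources):
    """Map each file name to the SPDX identifier found in its text, or None."""
    found = {}
    for name, text in sources.items():
        match = SPDX_TAG.search(text)
        found[name] = match.group(1) if match else None
    return found


def flag_for_review(found):
    """Return file names with an unknown license or one on the review list."""
    return sorted(
        name for name, lic in found.items()
        if lic is None or lic in NEEDS_REVIEW
    )


# Hypothetical file contents for demonstration.
sample = {
    "net.c": "// SPDX-License-Identifier: GPL-3.0-only\nint main(void) { return 0; }",
    "util.py": "# SPDX-License-Identifier: Apache-2.0\n",
    "legacy.js": "function f() {}",
}
report = flag_for_review(scan_sources(sample))
```

Here `report` lists the untagged file and the GPL-licensed file. Real compliance tools go much further, for example by matching code fragments against databases of known open source projects.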

Software Licenses

The companies or developers own the software they create. The software is protected by law either through patents, copyright, or licenses. It is up to the software owners to grant their users the right to use the software through the terms of the licenses.

For closed-source vendors, the terms vary depending on the price the users are willing to pay. Examples include single user, single installation, multi-users, multi-installations, per network, or machine.

Open-source vendors grant specific levels of permission to use the source code and set the conditions that apply to modified versions. Examples include freedom to distribute, remix, and adapt the code for non-commercial use, on the condition that the newly revised source code is also licensed under identical terms. While open-source vendors don’t make money by charging for their software, they generate revenues through donations or by selling technical support or related services. For example, Wikipedia is a widely popular, free-content online encyclopedia used by millions of users, yet it relies mainly on donations to sustain its staff and infrastructure.


Cloud Computing

Historically, for software to run on a computer, an individual copy of the software had to be installed on the computer, either from a disk or, more recently, after being downloaded from the Internet. The concept of “cloud” computing changes this model.

“The cloud” refers to applications, services, and data stored in data centers, server farms, and storage servers and accessed by users via the Internet. In most cases, the users don’t know where their data is actually stored. Individuals and organizations use cloud computing.

You probably already use cloud computing in some form. For example, if you access your email via your web browser, you are using a form of cloud computing. If you use Google Drive’s applications, you are using cloud computing. While these are free versions of cloud computing, there is big business in providing applications and data storage over the web. Large commercial applications can also exist on the cloud; for example, the entire suite of CRM from Salesforce is offered via the cloud. Cloud computing is not limited to web applications: it can also be used for phone or video streaming services.

Advantages of Cloud Computing

- No software to install or upgrades to maintain.

- Available from any computer that has access to the Internet.

- Can scale to a large number of users easily.

- New applications can be up and running very quickly.

- Services can be leased for a limited time on an as-needed basis.

- Your information is not lost if your hard disk crashes or your laptop is stolen.

- You are not limited by the available memory or disk space on your computer.

Disadvantages of Cloud Computing

- You must have Internet access to use it. If you do not have access, you’re out of luck.

- You are relying on a third party to provide these services.

- You don’t know how your data is protected from theft, or whether it is being sold by your own cloud service provider.

Cloud computing can greatly impact how organizations manage technology. For example, why is an IT department needed to purchase, configure, and manage personal computers and software when all that is really needed is an Internet connection?

Using a Private Cloud

Many organizations are understandably nervous about giving up control of their data and applications by using cloud computing. But they also see the value in reducing the need for installing software and adding disk storage to local computers. A solution to this problem lies in the concept of a private cloud. While there are various private cloud models, the basic idea is for the cloud service provider to rent a specific portion of its server space exclusively to a specific organization. The organization has full control over that server space while still gaining some of the benefits of cloud computing.

Cloud Emerging Technology/Current Trend

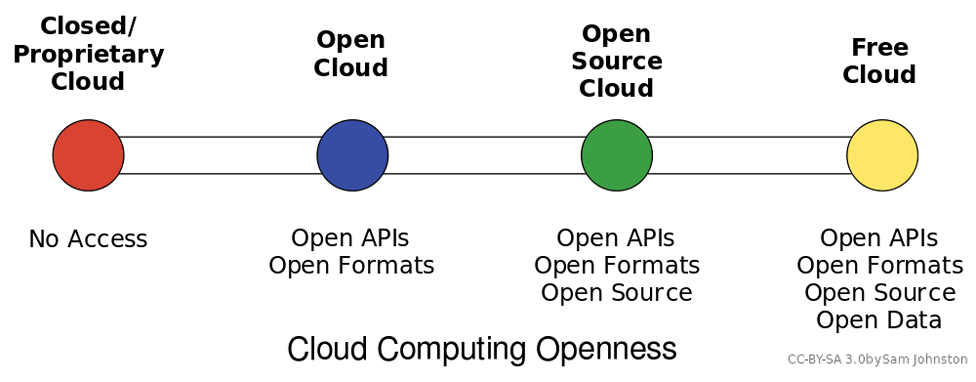

Cloud computing can be loosely defined as the allocation of hardware and/or software under a service model (resources are assigned and consumed as needed). Typically, what we hear today referred to as cloud computing is the concept of business-to-business commerce revolving around “Company A” selling or renting their services to “Company B” over the Internet. A cloud can be public (hosted on the public Internet, shared among consumers) or private (cloud concepts of provisioning and storage are applied to servers within a firewall or internal network that is privately managed), and can also fall into some smaller subsets in between, as depicted in the graphic above.

Under the Infrastructure as a Service (IaaS) computing model, which is what is most commonly associated with the term cloud computing, one or more servers with significant amounts of processing power, capacity, and memory are configured through hardware and/or software methods to act as though they are multiple smaller systems that add up to their capacity. This is referred to as virtualizing, or virtual servers. These systems can be “right sized” so that they only consume the resources they need on average, meaning many systems needing few resources can reside on one piece of hardware. When the processing demands of one system expand or contract, resources from that server can be added or removed to account for the change. This is an alternative to multiple physical servers, where each would need the ability to serve not only the average but also the expected peak needs of system resources.
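The "right sizing" idea above, where many small systems share one piece of hardware, can be illustrated as a packing problem. The sketch below places virtual servers onto as few physical hosts as it can using a simple first-fit-decreasing heuristic. The demand figures (percent of one host's capacity) are made up for the example, and real cloud schedulers weigh many more factors, such as memory, peak load, and failure isolation.

```python
def pack_first_fit_decreasing(demands, host_capacity=100):
    """Assign each demand to the first host with room, largest demands first.

    demands: average resource needs, as a percent of one host's capacity.
    Returns a list of hosts, each a list of the demands placed on it.
    """
    hosts = []
    for demand in sorted(demands, reverse=True):
        if demand > host_capacity:
            raise ValueError("a single demand exceeds one host's capacity")
        for host in hosts:
            if sum(host) + demand <= host_capacity:
                host.append(demand)
                break
        else:
            # No existing host had room, so bring another one online.
            hosts.append([demand])
    return hosts


# Six hypothetical systems that would otherwise need six physical servers.
placement = pack_first_fit_decreasing([20, 50, 10, 40, 30, 25])
```

With these sample numbers the six systems fit on two hosts instead of six dedicated machines. The heuristic is not guaranteed to find the smallest possible number of hosts in every case, but it shows why consolidating lightly loaded systems saves hardware.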

Software as a Service, Platform as a Service, and the ever-expanding list of “as-a-service” models follow the same basic pattern of balancing time and effort. Platforms as a service allow central control of end user profiles, and software as a service allows simplified (and/or automated) updating of programs and configurations. Storage as a service can replace the need to manually process backups and file server maintenance. Effectively, each “as-a-service” strives to provide the end user with an “as-good-if-not-better” alternative to managing a system themselves, all while trying to keep the cost of their services less than a self-managed solution.

Virtualization

The reduced costs and increased power of commodity hardware are not the only contributors to the explosion of cloud computing. The availability of increasingly sophisticated software tools has also had an impact. Perhaps the most important software tool in the cloud computing toolbox is virtualization. Virtualization is the use of software to create a virtual machine that simulates a computer with an operating system. For example, using virtualization, a single computer that runs Microsoft Windows can host a virtual machine that looks like a computer with a specific Linux-based OS. This ability maximizes the use of available resources on a single machine. Companies such as EMC provide virtualization software that allows cloud service providers to provision web servers to their clients quickly and efficiently. Organizations are also implementing virtualization to reduce the number of servers needed to provide the necessary services. For more detail on how virtualization works, see this informational page from VMware.

Think of virtualization as being a kind of operating system for operating systems. A server running virtualization software can create smaller compartments in memory that each behave as a separate computer with its own operating system and resources. The most sophisticated of these tools also allow firms to combine servers into a huge pool of computing resources that can be allocated as needed (Lyons, 2008).

Virtualization can generate huge savings. Some studies have shown that on average, conventional data centers run at 15 percent or less of their maximum capacity. Data centers using virtualization software have increased utilization to 80 percent or more (Katz, 2009). This increased efficiency means cost savings in hardware, staff, and real estate. Plus it reduces a firm’s IT-based energy consumption, cutting costs, lowering its carbon footprint, and boosting “green cred” (Castro, 2007). Using virtualization, firms can buy and maintain fewer servers, each running at a greater capacity. They can also power down servers until increases in demand require them to come online.
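A quick back-of-the-envelope calculation shows what the utilization figures above imply. The sketch assumes identical servers and workloads that can be freely redistributed, which is a simplification; the data center size of 100 servers is a hypothetical example, while the 15 and 80 percent figures come from the paragraph above.

```python
import math


def servers_after_consolidation(current_servers, current_util_pct, target_util_pct):
    """Servers needed to carry the same total work at a higher utilization."""
    total_work = current_servers * current_util_pct  # in "server-percent" units
    return math.ceil(total_work / target_util_pct)


# A hypothetical data center with 100 servers running at 15 percent.
needed = servers_after_consolidation(100, 15, 80)
```

Under these assumptions the same workload fits on 19 servers running at 80 percent, so roughly four out of five machines could be retired or powered down.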

While virtualization is a key software building block that makes public cloud computing happen, it can also be used in-house to reduce an organization’s hardware needs, and even to create a firm’s own private cloud of scalable assets. Bechtel, BT, Merrill Lynch, and Morgan Stanley are among the firms with large private clouds enabled by virtualization (Brodkin, 2008). Another kind of virtualization, virtual desktops, allows a server to run what amounts to a copy of a PC (OS, applications, and all) and simply deliver an image of what’s executing to a PC or other connected device. This allows firms to scale, back up, secure, and upgrade systems far more easily than if they had to maintain each individual PC. One game start-up hopes to remove the high-powered game console hardware attached to your television and instead put the console in the cloud, delivering games to your TV as they execute remotely on superfast server hardware. Virtualization can even live on your desktop. Anyone who’s ever run Windows in a window on Mac OS X is using virtualization software; these tools inhabit a chunk of your Mac’s memory for running Windows and actually fool this foreign OS into thinking that it’s on a PC.

Interest in virtualization has exploded in recent years. VMware, the virtualization software division of storage firm EMC, was the biggest IPO of 2007. But its niche is getting crowded. Microsoft has entered the market, building virtualization into its server offerings. Dell bought a virtualization software firm for $1.54 billion. And there’s even an open source virtualization product called Xen (Castro, 2007).

Virtualization Emerging Technology/Current Trend

Server virtualization is the act of running multiple operating systems and other software on the same physical hardware at the same time, as we discussed in Cloud Computing. A hardware and/or software element is responsible for managing the physical system resources at a layer in between each of the operating systems and the hardware itself. Doing so allows the consolidation of physical equipment into fewer devices, and is most beneficial when the servers sharing the hardware are unlikely to demand resources at the same time, or when the hardware is powerful enough to serve all of the installations simultaneously.

The act of virtualizing is not just for use in cloud environments, but can be used to decrease the “server sprawl,” or overabundance of physical servers, that can occur when physical hardware is installed on a one-to-one (or few-to-one) scale to applications and sites being served. Special hardware and/or software is used to create a new layer in between the physical resources of your computer and the operating system(s) running on it. This layer manages what each system sees as being the hardware available to it, and manages allocation of resources and the settings for all virtualized systems. Hardware virtualization, or the stand alone approach, sets limits for each operating system and allows them to operate independent of one another. Since hardware virtualization does not require a separate operating system to manage the virtualized system(s), it has the potential to operate faster and consume fewer resources than software virtualization. Software virtualization, or the host-guest approach, requires the virtualizing software to run on an operating system already in use, allowing simpler management to occur from the initial operating system and virtualizing program, but can be more demanding on system resources even when the primary operating system is not being used.

Ultimately, you can think of virtualization like juggling. In this analogy, your hands are the servers, and the balls you juggle are your operating systems. The traditional approach of hosting one application on one server is like holding one ball in each hand. If your hands are both “busy” holding a ball, you cannot interact with anything else without putting a ball down. If you juggle them, however, you can “hold” three or more balls at the same time. Each time your hand touches a ball is akin to a virtualized system needing resources, and having those resources allocated by the virtualization layer (the juggler) assigning resources (a hand), and then reallocating for the next system that needs them.

The addition of a virtual machine as shown above allows the hardware or software to see the virtual machine as part of the regular system. The monitor itself divides the resources allocated to it into subsets that act as their own computers.
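The juggling analogy above can be sketched as a toy scheduler in which a single processor (the hand) is handed to each virtual system (a ball) for a short time slice before moving on to the next. This is only an illustration of the idea of sharing one resource in turns; the function name, the VM names, and the round-robin policy are assumptions for the example, and real virtualization layers use far more sophisticated scheduling.

```python
from collections import deque


def round_robin(work_units, slice_size):
    """Share one processor among virtual machines in fixed time slices.

    work_units maps a VM name to the units of work it still needs.
    Returns the order of (vm_name, units_run) turns on the processor.
    """
    if slice_size <= 0:
        raise ValueError("slice_size must be positive")
    queue = deque(work_units.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        ran = min(slice_size, remaining)
        timeline.append((name, ran))
        if remaining > ran:
            # Not finished: toss the ball back up and catch it again later.
            queue.append((name, remaining - ran))
    return timeline


# Three hypothetical virtual machines sharing one processor.
turns = round_robin({"vm_a": 3, "vm_b": 1, "vm_c": 2}, slice_size=2)
```

In the resulting timeline `vm_a` runs for two units, the other two machines finish their work, and then `vm_a` gets the processor back for its last unit. No machine holds the hardware idle while others wait, which is the point of the analogy.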

Summary

Software gives the instructions that tell the hardware what to do. There are two basic categories of software: operating systems and applications. Operating systems provide access to the computer hardware and make system resources available. Application software is designed to meet a specific goal. Productivity software is a subset of application software that provides basic business functionality to a personal computer: word processing, spreadsheets, and presentations. An ERP system is a software application with a centralized database that is implemented across the entire organization. Cloud computing is a software delivery method in which applications run on remote servers and can be used from any computer with a web browser and access to the Internet. Software is developed through a process called programming, in which a programmer uses a programming language to put together the logic needed to create the program. Software can follow an open-source or a closed-source model, and users or developers are granted different licensing terms under each.

Learn more

Keywords, search terms: Cloud computing, virtualization, virtual machines (VMs), software virtualization, hardware virtualization

Xen and the Art of Virtualization: http://li8-68.members.linode.com/~caker/xen/2003-xensosp.pdf

Virtualization News and Community: http://www.virtualization.net

Cloud Computing Risk Assessment: http://www.enisa.europa.eu/activities/risk-management/files/deliverables/cloud-computing-risk-assessment

Without formal legislation, judges and juries are placed in positions where they establish precedent by ruling on these issues, while having little guidance from existing law. As recently as March 2012, a file sharing case from 2007 reached the Supreme Court, where the defendant was challenging the constitutionality of a $222,000 fine for illegally sharing 24 songs on the file sharing service Kazaa. This was the first such lawsuit heard by a jury in the United States. Similar trials have varied in penalties up to $1.92 million, highlighting a lack of understanding of how to assign a monetary value to damages. The Supreme Court declined to hear the Kazaa case, which means the existing verdict will stand for now. Many judges are now dismissing similar cases that are being brought by groups like the Recording Industry Association of America (RIAA), as these actions are more often being seen as the prosecution using the courts as a means to generate revenue and not to recover significant, demonstrable damages.

As these cases continue to move through courts and legislation continues to develop at the federal level, those decisions will have an impact on what actions are considered within the constructs of the law, and may have an effect on the contents or location of your site.